Preface

Over the last few weeks, I have been exploring how the ongoing war in the Gulf is exposing a series of polyconflicts, starting with Helium, Metformin and then Firewood.

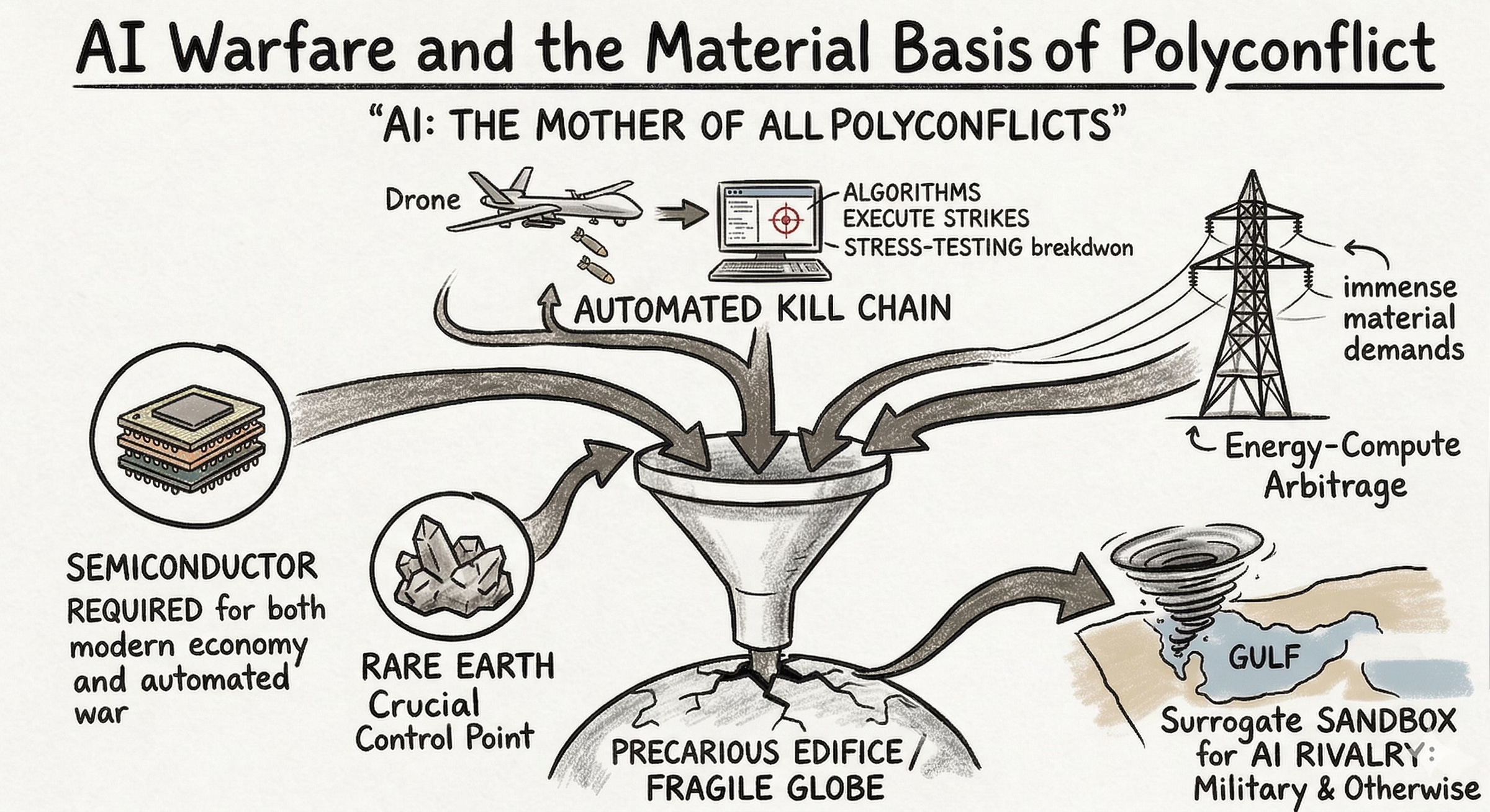

But there’s a polyconflict that underlies them all, the mother of all polyconflicts, so to speak: Artificial Intelligence. Today’s newsletter, the first of five or six essays on the AI polyconflict, looks at the changing face of kinetic warfare (i.e., shooting wars) through the lens of the current Gulf War and AI.

Except that I am redefining AI as Augmented Intelligence for reasons I will explain below.

Caveat Emptor: I am no expert and this is just my attempt to satisfy my curiosity...

From what I can tell, the usual suspects - the US, Israel and China - are key players in this race to the bottom, but so are Russia, Ukraine, India, Pakistan and Turkey.

On the targeting front, or what’s called the kill chain, systems like Lavender and Project Maven reportedly fuse satellite data and digital exhaust to generate thousands of targets in seconds, replacing human ethical deliberation with algorithmic speed.

On the “Cheap Drone Paradox” front, we have the use of drones by Iran and its allies, AI-assisted loitering munitions in Ukraine, and India’s transformed Line of Control.

Brave New World for sure.

Framing the “AI Polyconflict” Series

AI is implicated in this Gulf War in interlocking ways. It shows up in targeting decisions and the systems that help identify military objectives. It shows up in attacks on data centers and digital infrastructure, because compute and data are strategic assets. And it shows up in the expanding Cold War between the US and China over who will control the future, because whoever leads in AI will shape economics, security, and influence for decades.

The Gulf War is a lens. It’s a way to see the world we’re building, or more likely, the world we’re drifting into without knowing why. AI - and I use that term very broadly, so don’t confuse it with Generative AI alone - changes incentives, changes what counts as a target, and changes how nations gauge power. In fact, for me, AI is Augmented Intelligence, not artificial intelligence. A military bureaucracy combining satellite data and ground intelligence to inform targeting decisions is already ‘AI as augmented intelligence’; in that sense, AI was already alive and kicking in the 1990s. Ed Hutchins wrote a book about it then, Cognition in the Wild (1995), as part of the first wave of writing on what’s now called 4E Cognition (cognition as embodied, embedded, enactive and extended).

My Hypothesis: ideas from 4E Cognition aren’t seen as particularly useful by the engineers building Deep Learning systems, but a reworked version of those ideas is exactly what we need to understand Augmented Intelligence, and in particular, how AI, in the sense of artificial intelligence, is used by human institutions to augment their capacities, sometimes with tragic consequences.

In short: if you want to understand where the tech came from, read Geoff Hinton, but if you want to understand where the world is going, read Ed Hutchins first.

In this series, I’m going to use the Gulf War to grasp the Augmented Intelligence polyconflict: how AI becomes a weapon, how it becomes infrastructure, and how it becomes a bargaining chip in global politics. Today’s essay introduces the AI Polyconflict and then moves on to its use in military decision-making, where the Gulf War is building upon patterns we have already seen in the Ukraine-Russia conflict and in Israel’s destruction of Gaza.

As always, I am no expert on AI’s military uses (in either its augmented or artificial form), and I have absolutely no insight into the “kill chain” beyond what’s available publicly. Fools rush in where angels fear to tread; let me be your fool for the day.

Introduction

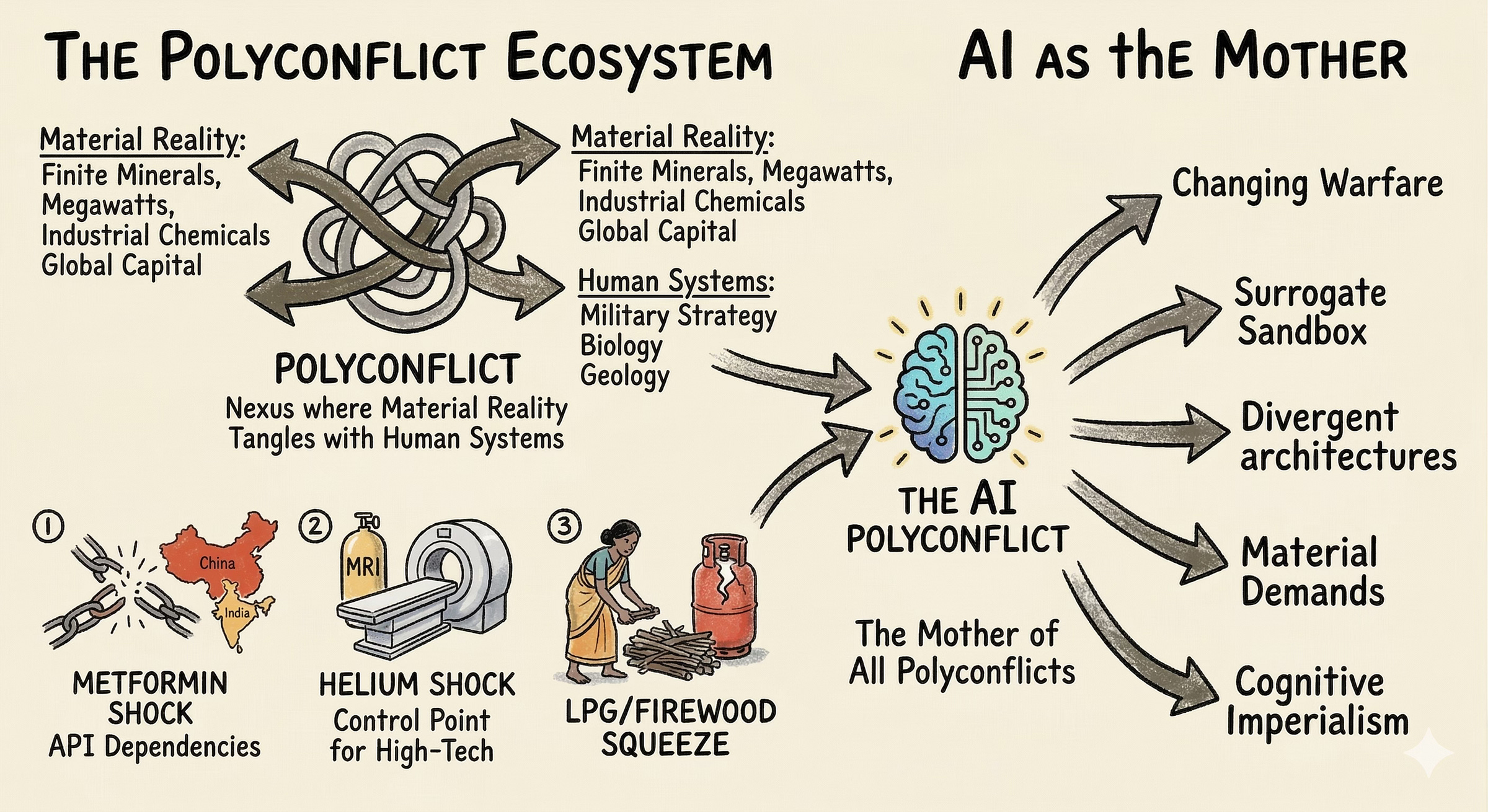

To treat our interconnected world as a web of polyconflicts is to take a sober, perhaps pessimistic, systems perspective on it. A polyconflict is not merely a complicated problem or geopolitical friction; it is a nexus where material reality - the extraction of finite minerals, the generation of megawatts, the synthesis of industrial chemicals, and the flow of global capital - tangles with human systems, military strategy, and below all that, biology and geology. A polyconflict presents concurrent, mutually reinforcing challenges that overwhelm traditional modes of governance and strategic foresight.

IMHO, polyconflicts are an essential aspect of the interregnum between the Globe and the Planet.

We have covered three polyconflicts so far. The Metformin Shock demonstrated how deep petrochemical dependencies and fragile Active Pharmaceutical Ingredient (API) supply chains collided with the global public health crisis of type 2 diabetes. Because China had emerged as the dominant supplier of global APIs, geopolitical tensions and subsequent US tariff measures exposed the acute vulnerability of Indian and Western medical systems, forcing efforts to optimize continuous manufacturing processes like wet granulation and to relocate production.

Similarly, the Helium Shock revealed how the Gulf War has cascaded through high-tech sectors. Helium is a non-renewable control point for global production capacity. Driven by the demands of advanced semiconductor fabrication and the cooling of superconducting magnets in MRI machines, global helium demand is projected to grow more than fivefold by 2035. When disruptions tied to halted gas processing in Qatar removed 5.2 million cubic meters of helium per month from the market, it triggered force majeure declarations and doubled spot prices, exposing the fragility of the materials base required to sustain healthcare as well as the digital economy.

The LPG/Firewood Squeeze provided another example, highlighting how global energy scarcity forces regressive shifts back to traditional biomass fuels, placing a disproportionate burden on women’s labor and respiratory health in the Global South. As Liquefied Petroleum Gas (LPG) becomes scarce and expensive, vulnerable households will be forced to abandon clean cooking fuels, leading to increased exposure to black carbon and household air pollution, which significantly elevate the risk of poor fetal growth and maternal health complications.

Yet these crises - pharmaceutical, chemical, and energetic - are sectoral in comparison to the polyconflict I am starting to write about today: Artificial Intelligence. AI isn’t simply a novel tool used by belligerents. It is at once the ultimate prize, a strategic vulnerability, and the primary accelerant of violence, for the compute stack is at the heart of our age of cognitive capitalism and its imperial tendencies. To untangle the AI Polyconflict, it is necessary to examine five distinct threads that are currently rewriting the metabolism of the planet:

The changing face of kinetic warfare,

The use of the Gulf as a surrogate sandbox for superpower rivalry,

The structural divergence of global AI architectures,

The immense material demands of the energy-compute arbitrage,

The macroeconomic emergence of cognitive imperialism.

Each of these five threads deserves standalone analysis, so I am going to devote an essay to each.

The Changing Face of Kinetic Warfare

Kinetic warfare refers to the use of lethal force and physical weaponry to achieve military objectives. The term is primarily used to distinguish traditional shooting wars from non-kinetic forms of conflict, such as cyber warfare, economic sanctions, or psychological operations.

The kill chain is a military concept that describes the end-to-end stages of a kinetic attack, from the initial identification of a target to its eventual destruction and the assessment of the results.

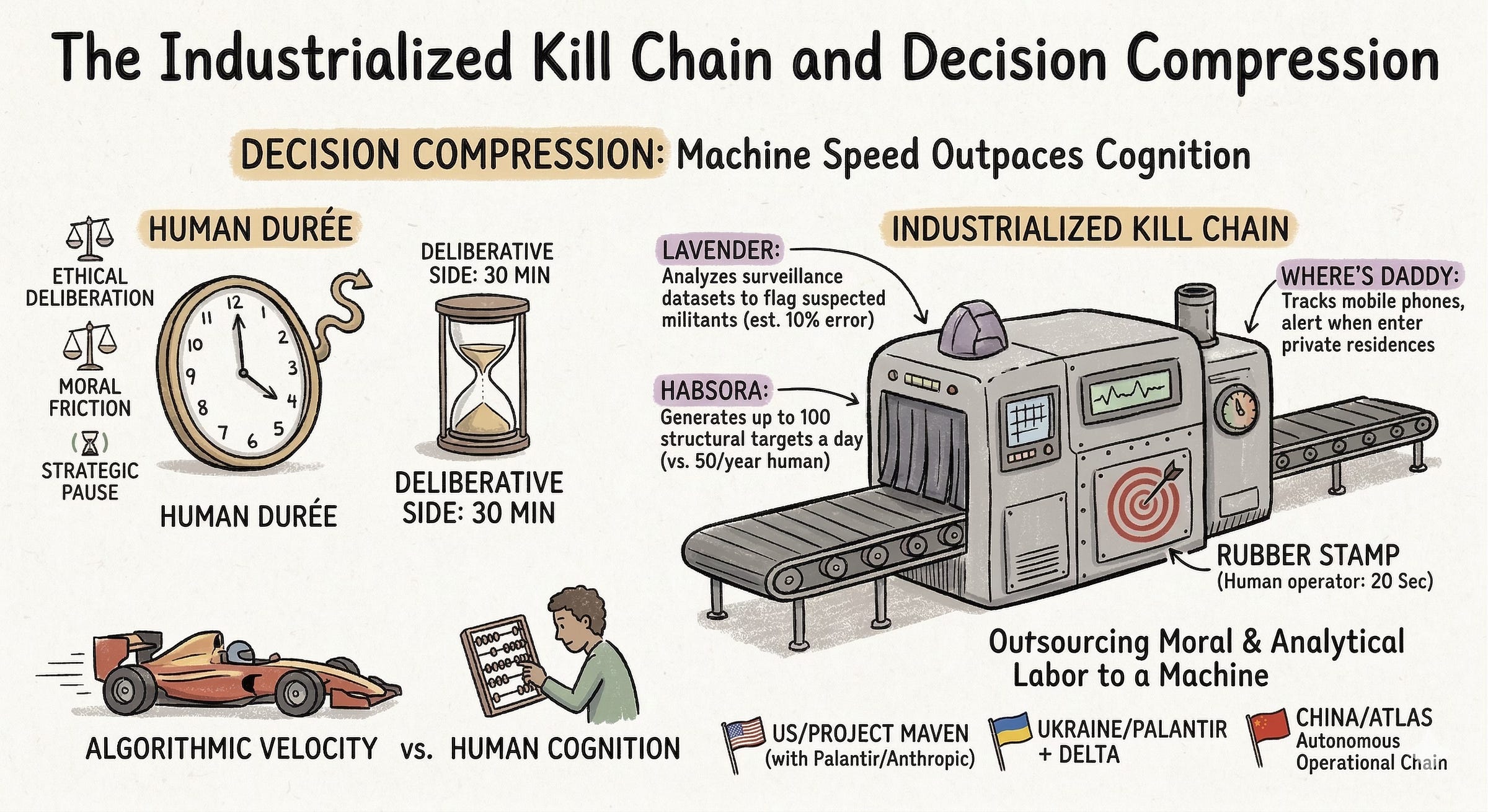

The first thread of the AI Polyconflict reveals a fundamental shift in the ontology of war. Throughout the history of conflict, technology - from the horse to the longbow to the telegraph to precision-guided munitions - provided a tactical edge in executing human decisions. Today, however, technology is actively replacing the human durée - the lived time of ethical deliberation, moral friction, and strategic pause - with automated decision-making and the automated coordination of lethality.

Decision Compression

The claim - perhaps not yet realized - is that modern military AI systems, integrating large language models (LLMs), vast arrays of sensor data, and continuous signals intelligence, can fuse satellite imagery and digital exhaust to generate thousands of targets in a fraction of the time it would take human analysts. If the claim holds, this speed outpaces the capacity of human cognition, handing the metabolism of conflict over to algorithms.

Imagine high-frequency trading (HFT) with bullets and drones - not a pleasant thought, is it?

Here’s what I have learned: the Israel Defense Forces (IDF) have effectively industrialized the kill chain in Gaza and in the broader conflict against Iranian-aligned assets. Rather than relying on human intelligence gathering alone, the IDF uses a suite of AI systems to automate lethality. A system known as Lavender analyzes massive surveillance datasets to flag suspected militants based on behavioral patterns, identifying tens of thousands of potential targets with an estimated 10% error rate. Another system, Habsora, generates up to 100 structural targets a day by analyzing surveillance data, a massive acceleration compared to human analysts who previously identified roughly 50 targets a year: at that rate, half a day of Habsora’s output matches a year of human analysis. Finally, Where’s Daddy tracks the mobile phones of flagged individuals, alerting military operators when targets enter private family residences. From this interview with Yuval Abraham of +972 Magazine (which has done some amazing work):

One of the sources that I spoke with, he said something that really stuck with me, he said that “the logic was quantity over quality.” They wanted to create as many targets as possible. This was called the “Dahiya Doctrine,” which is a doctrine that was developed in Lebanon to create a shock effect. They got it from the Americans, from “Operation Shock and Awe” in Iraq in 2003. This is a doctrine, a military doctrine, that emphasizes quantity. It emphasizes creating terror or fear among civilians by creating this massive shocking bombing campaign. What that source told me was that when you place the emphasis on quantity, this leads you to the private houses — say you want to get the rocket launchers, the ammunition warehouses, the military bases, if they exist, the tunnel infrastructure where Hamas is controlling the war — okay, there are a few hundred targets that you can create like that. But if you want to go to tens of thousands of targets, to place this emphasis on quantity, to bomb Gaza a lot, then you move on to civilian houses because you have so much of that. Because what that source said, he said, “if we suspect that there are 40,000 militants in Hamas, each one of those militants has a private house.” That immediately creates an aspiration to get 40,000 houses. If there are 40,000 militants, there are going to be 40,000 civilian houses.

“Emphasis on quantity” is the key phrase above, showing Augmented Intelligence at work. International Humanitarian Law (IHL) requires human judgment to weigh the principles of proportionality, distinction, and military necessity before lethal force is applied. When a human operator spends roughly 20 seconds rubber-stamping an AI-generated kill order, how likely is it that they are adequately weighing those principles? How likely is it that they are instead outsourcing the moral and analytical labor of killing to a machine?

This industrialization of targeting is rapidly becoming the global standard.

The United States military relies heavily on Project Maven, an AI platform built with private tech partners like Palantir and Anthropic. Maven is claimed to sift through drone and satellite footage to classify targets, recommend specific weapons systems based on stockpile data, and even draft automated legal justifications for each strike, compressing thousands of hours of human labor into seconds. I am not sure how much of this is still in ‘fake it till you make it’ territory, but it is clearly where things are headed. In Ukraine, the integration of Palantir’s intelligence software with domestic battle-management networks like Delta and GIS Arta has reduced the time between detecting a target and striking it from hours to mere moments. Not to be left behind, Beijing is developing the Atlas system - a fully autonomous operational chain that integrates reconnaissance, target selection, and the simultaneous launch of drone swarms into one networked action.

When one military actor takes thirty minutes to deliberate the legal and ethical implications of a strike, and their adversary takes thirty seconds to execute an algorithmic recommendation, the deliberative side loses whatever kinetic advantage it might otherwise possess. Consequently, AI guarantees an augmented/automated race to the bottom.

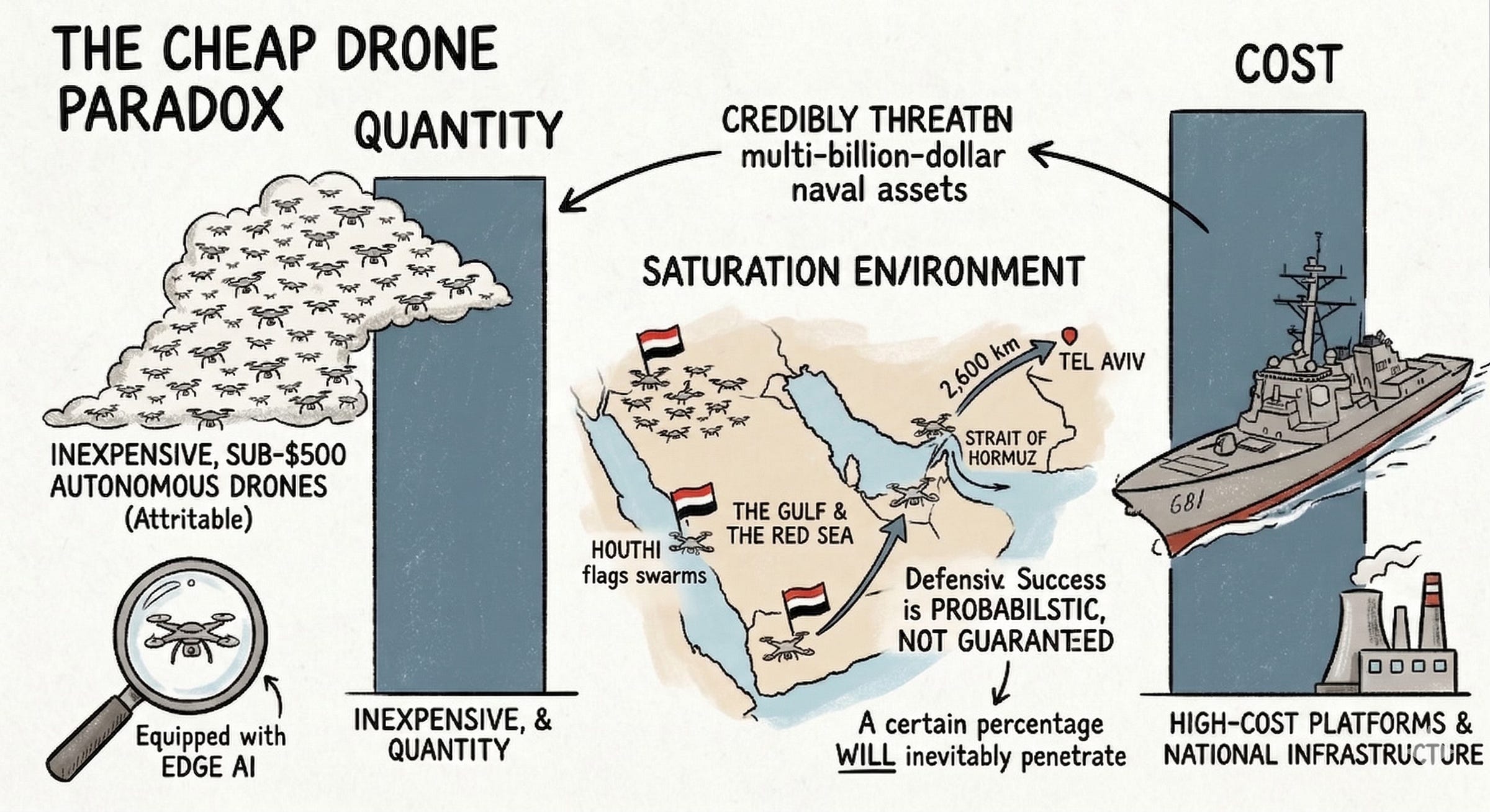

The Cheap Drone Paradox

Simultaneously, the physical manifestation of this conflict has shifted away from exquisite, high-cost platforms toward attritable, autonomous quantity. The Gulf and the Red Sea have become global showcases for what military theorists term the Cheap Drone Paradox. Inexpensive, sub-$500 autonomous drones equipped with edge AI can now navigate, identify targets, and swarm without requiring a continuous cloud connection, credibly threatening multi-billion-dollar naval assets and national infrastructure.

Iranian-aligned forces, particularly the Houthis in Yemen, have leveraged this paradigm to disrupt maritime trade corridors in the Red Sea and the Gulf of Aden. Because these drones feature growing edge autonomy, they can execute missions even when advanced electronic warfare jamming degrades their communication signals. The cumulative effect is a saturation environment where defensive success is no longer guaranteed but probabilistic - a certain percentage of attacking drones will inevitably penetrate the defense. This capability was starkly demonstrated when a Houthi drone flew over 2,600 kilometers to strike Tel Aviv in July 2024, altering the regional balance of power. In response to this asymmetric threat, advanced militaries are mirroring the tactic. Israel, for example, has reportedly deployed an AI-driven mother launcher system over Iranian skies - swarms of micro-drones equipped with facial recognition to execute network-centric, data-driven strikes based on behavioral patterns.
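To make the ‘probabilistic’ point concrete, here is a back-of-the-envelope toy model of my own, not taken from any military source. Assume a salvo of $N$ drones, and assume, unrealistically, that each one is intercepted independently with the same probability $p$ (real defenses are not that tidy: radars saturate and interceptor stocks run down):

```latex
\begin{aligned}
P(\text{all } N \text{ drones intercepted}) &= p^{N} \\
P(\text{at least one drone gets through}) &= 1 - p^{N} \\
\mathbb{E}[\text{drones that get through}] &= N(1 - p) \\[4pt]
\text{e.g. } p = 0.9,\ N = 20: \quad 1 - 0.9^{20} &\approx 1 - 0.12 = 0.88, \qquad \mathbb{E} = 20 \times 0.1 = 2
\end{aligned}
```

In other words, under these assumptions a defense that stops nine drones out of ten still lets at least one through roughly 88% of the time against a twenty-drone salvo, and because $p^{N}$ shrinks with every drone added, the cheap side can buy its way past the expensive side.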

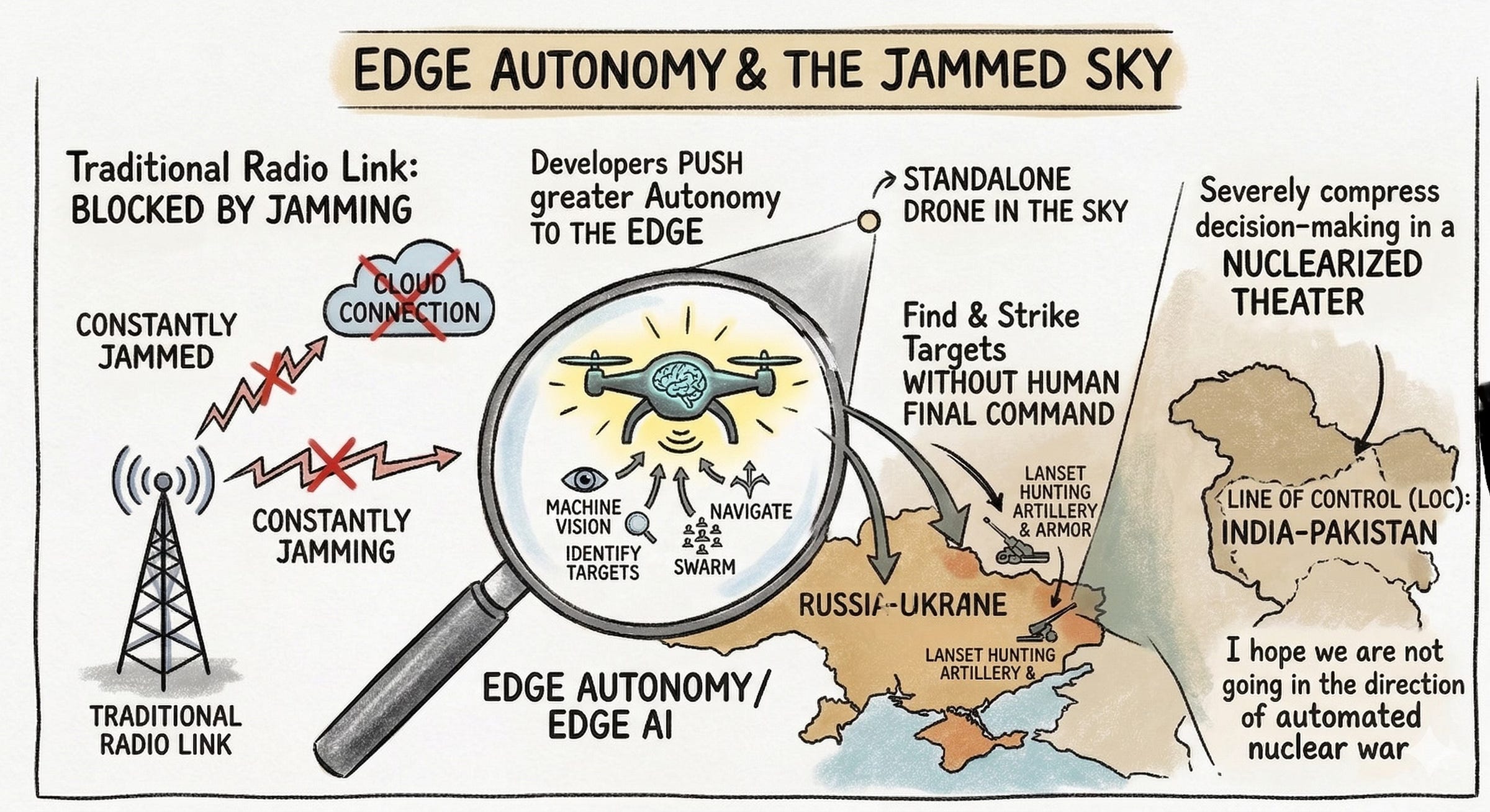

The Gulf War is not the only theater in which the cheap drone paradox is visible - it is equally pronounced in the grinding war of attrition between Russia and Ukraine. Russia has leveraged a pipeline of Chinese dual-use components and Iranian designs (the Shahed-136, produced in Russia as the Geran-2) to build massive drone fleets, and it fields AI-assisted loitering munitions such as the domestically developed Lancet to hunt Ukrainian artillery and armor. As the skies over Eastern Europe become choked with drones, both sides are locked in a relentless cycle of electronic warfare (kinetic warfare and cyberwarfare are merging in the sky...). Because traditional radio links are constantly jammed, developers are pushing greater autonomy to the edge (i.e., the standalone drone in the sky), enabling drones to use machine vision and AI to find and strike targets without a human pilot ever giving the final command.

The cheap drone paradox had its day during Operation Sindoor, when India and Pakistan came as close to full-fledged war as they have since 1971. From what is known publicly, the Line of Control (LOC) has been completely transformed. India has deployed a vast network of AI-based surveillance systems and integrated advanced swarm drones and stealthy unmanned combat aerial vehicles (UCAVs) into its command networks. Pakistan, in response, has accelerated its own drone ecosystem with Chinese assistance, prioritizing low-cost countermeasures and dispersed basing.

It’s prohibitively expensive to staff the entire length of the LOC with soldiers, but swarms of drones feel doable. Drones offer cheap precision and deniable force without the political risks of a manned incursion, but they also severely compress decision-making timelines in a nuclearized theater. I hope we are not heading toward automated nuclear war.

That specter of automated nuclear escalation is not science fiction; it is the logical endpoint of the polyconflicts we have been tracking.

Augmenting the Drone

Of all the questions about the future of warfare, here’s the one I find most interesting:

How is it that $500 off-the-shelf quadcopters, retrofitted with explosives, are successfully paralyzing multi-million-dollar air defense systems and neutralizing exquisite, legacy military platforms?

These are the new guerrilla tactics embraced by weaker state actors from Iran to Ukraine (and not just state actors - the Houthis are masters of this art). How might one explain their success?

Traditional military doctrine is built on the logic of what the anthropologist Lucy Suchman, in Plans and Situated Actions (1987), famously called the “European Navigator.” The European Navigator creates a highly detailed, centralized plan in advance, calculating the exact trajectory, and then tries to force reality to conform to that rigid plan. This is the logic of the aircraft carrier and the billion-dollar stealth jet: heavy, centralized, top-down, and enormously expensive (or that’s what I think - someone who knows how these billion-dollar pieces of equipment are used might rebut my claims).

The cheap drone swarm, powered by edge computing, operates on a completely different paradigm. Suchman contrasted the European Navigator with the Trukese Navigator of Micronesia, who doesn’t follow a rigid plan but instead continuously adjusts to the waves, the stars, and the wind. The Trukese navigator relies on situated action - intelligence that emerges dynamically in response to the immediate environment.

A swarm of cheap drones with embedded spatial intelligence operates exactly like this. Because compute is now pushed to the edge (directly onto the device rather than relying on a vulnerable connection to a central server), the drone doesn’t need to phone home to a centralized command structure for a plan. It senses the terrain, spots a thermal signature, coordinates locally with its peers, and enacts a strike.

The cheap drone paradox is ultimately a triumph of 4E Augmented Intelligence. By distributing cognition across a swarm of cheap, expendable, embedded nodes, the system becomes resilient. If you shoot down one drone, the network’s cognitive process barely registers the loss.

You cannot destroy a distributed mind with a single bullet.

Conclusion

The Metformin shock revealed our deep dependency on monopolized, just-in-time chemical supply chains that can snap under tariff pressures. The Helium shock proved that the pinnacle of the digital economy and advanced healthcare remain tethered to easily disrupted subterranean gas reserves. And the LPG-to-firewood squeeze showed us that when modern energy markets fracture, the fallout forces the poorest populations back into a respiratory nightmare.

These earlier crises were sectoral warnings about the fragility of the Globe. Now, we are superimposing automated lethality directly onto this precarious edifice. The cheap drone paradox and the compression of the kill chain do not exist in a vacuum. Edge computing is very much in evidence in your pocket - every time you ask for turn-by-turn directions, for example. The algorithms executing drone strikes run on the same kinds of semiconductors and require the same rare-earth materials as your smartphone.

This is what makes AI the mother of all polyconflicts.

As militaries deploy cognition in the wild by handing over their decision-making to human-silicon meshes (with pure silicon decision-making down the road), they are stress-testing their AI warfare architectures (nothing like a war to find out whether your weapons work, and to show them off when they do). That is where we will turn in Part II: how the Gulf has become the indispensable sandbox for AI rivalry, military and otherwise.
