Preface

In Part II, I argued that Augmented Intelligence in Society - the thing we should actually be paying attention to when we talk about AI, unless you’re a machine learning researcher or engineer - should be understood as a merger of two ideas: distributed cognition (from the 4E Cognition tradition) and political technology (from Michel Foucault and his successors). Militaries have always been artificial intelligences, vast distributed cognitive systems that format human bodies into standardized components and extend their capacities through material artifacts. The cybernetic military-industrial complex of the Cold War was the most consequential iteration of this political technology before our own. Contemporary AI systems are its children, carrying the congealed patterns of that complex in their statistical weights.

Part II ended with a question: what new forms of discipline and institutional design does AI require? The answer, I’m afraid, is already taking shape - and it looks less like international humanitarian law and more like a global protection racket. I want to retreat from the battlefield and look at the geopolitical architecture of the AI Polyconflict. Because the kinetic conflicts in the Gulf, in Ukraine, and along the India-Pakistan border are not strictly local disputes. They function as surrogate battlegrounds for the defining superpower rivalry of our century: the contest between the United States and China over who will control the cognitive infrastructure of the planet (read that in a deep ominous voice introducing a PBS documentary on the Second Cold War).

The Surrogate Sandbox

At the end of Part I, I wrote that militaries are stress-testing AI warfare architecture in the Gulf, and that nothing beats a war for finding out if your weapons work. That was the narrow version of the claim. The broader version is this: the Gulf has become a surrogate sandbox not just for weapons systems, but for entire civilizational models of Augmented Intelligence.

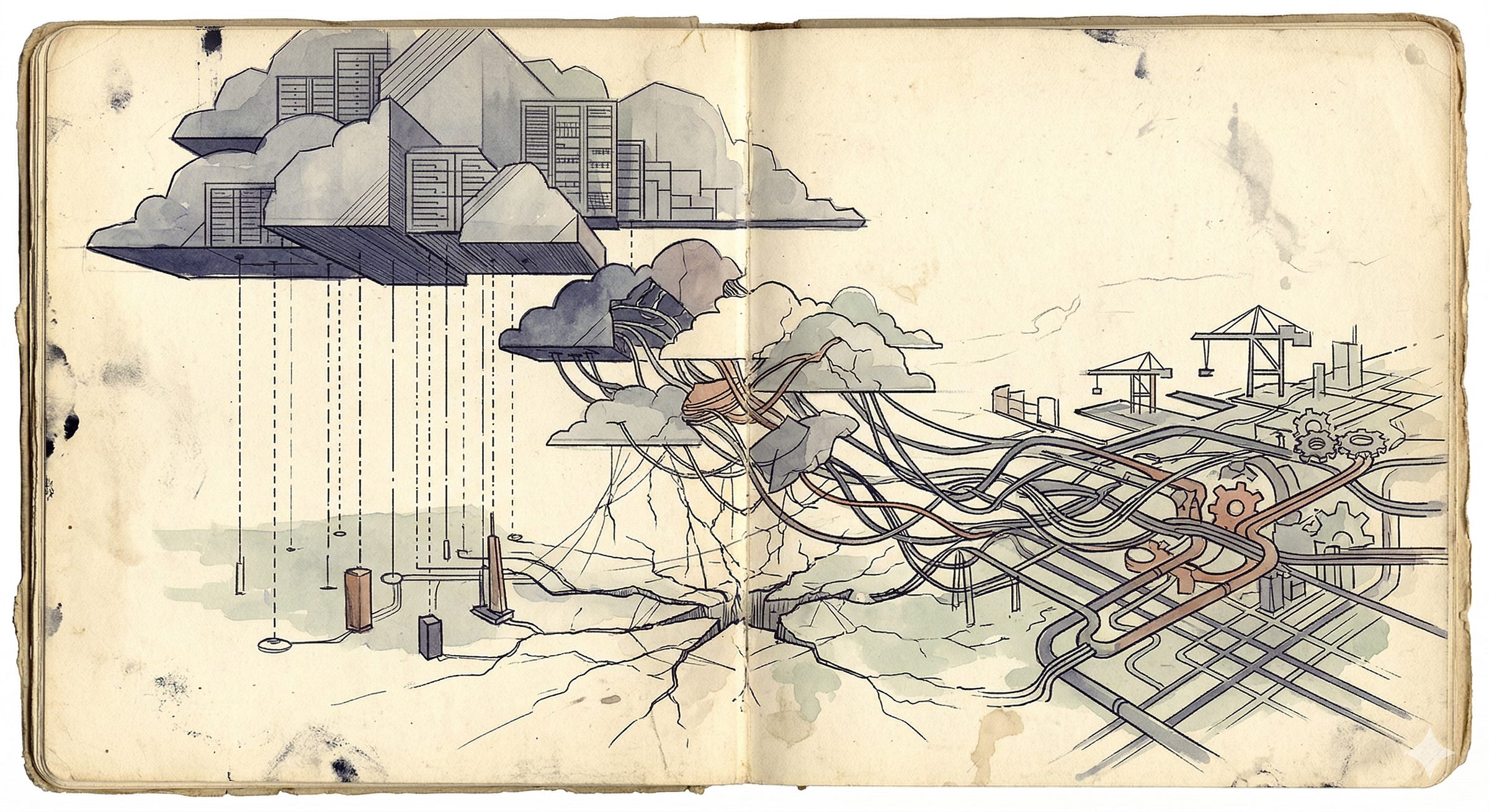

The physical geography of the Middle East and elsewhere is being inscribed by the digital geography of competing AI stacks. The United States and China are each projecting a different architecture for how human institutions should be cognitively augmented - a different vision of what the human-machine ensemble should look like at the scale of nations and economies. The Gulf, with its vast capital reserves, its cheap energy, and its active war zone, is one of those places where these competing visions collide.

To understand what’s happening here, I want to use the same framework from Part II. If Augmented Intelligence is the merger of distributed cognition and political technology, then the Stack War between the US and China is a contest between two different political technologies, each producing its own kind of augmented institutional cognition, each disciplining its client states in different ways, and each carrying the congealed patterns of the civilization that produced it.

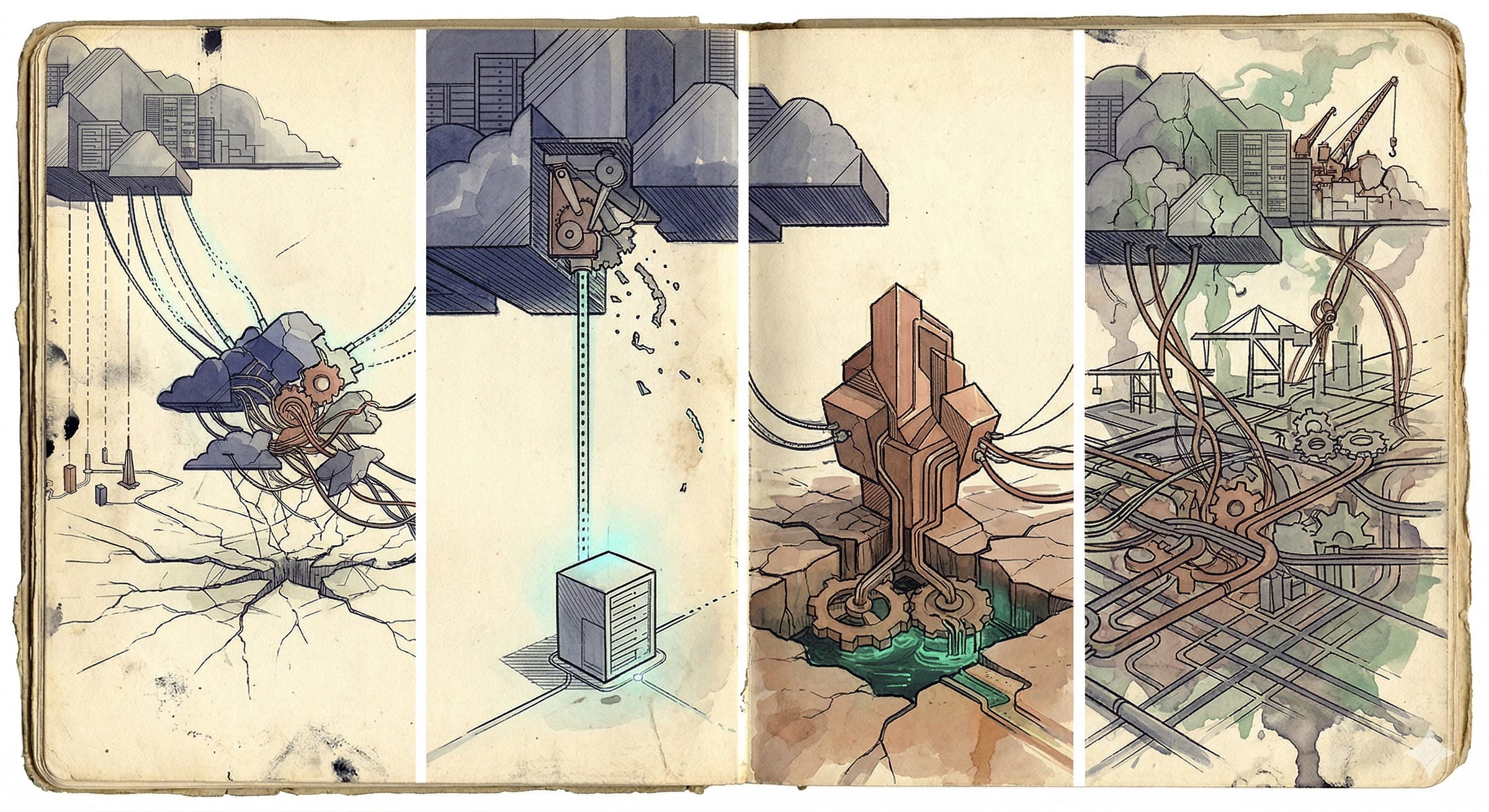

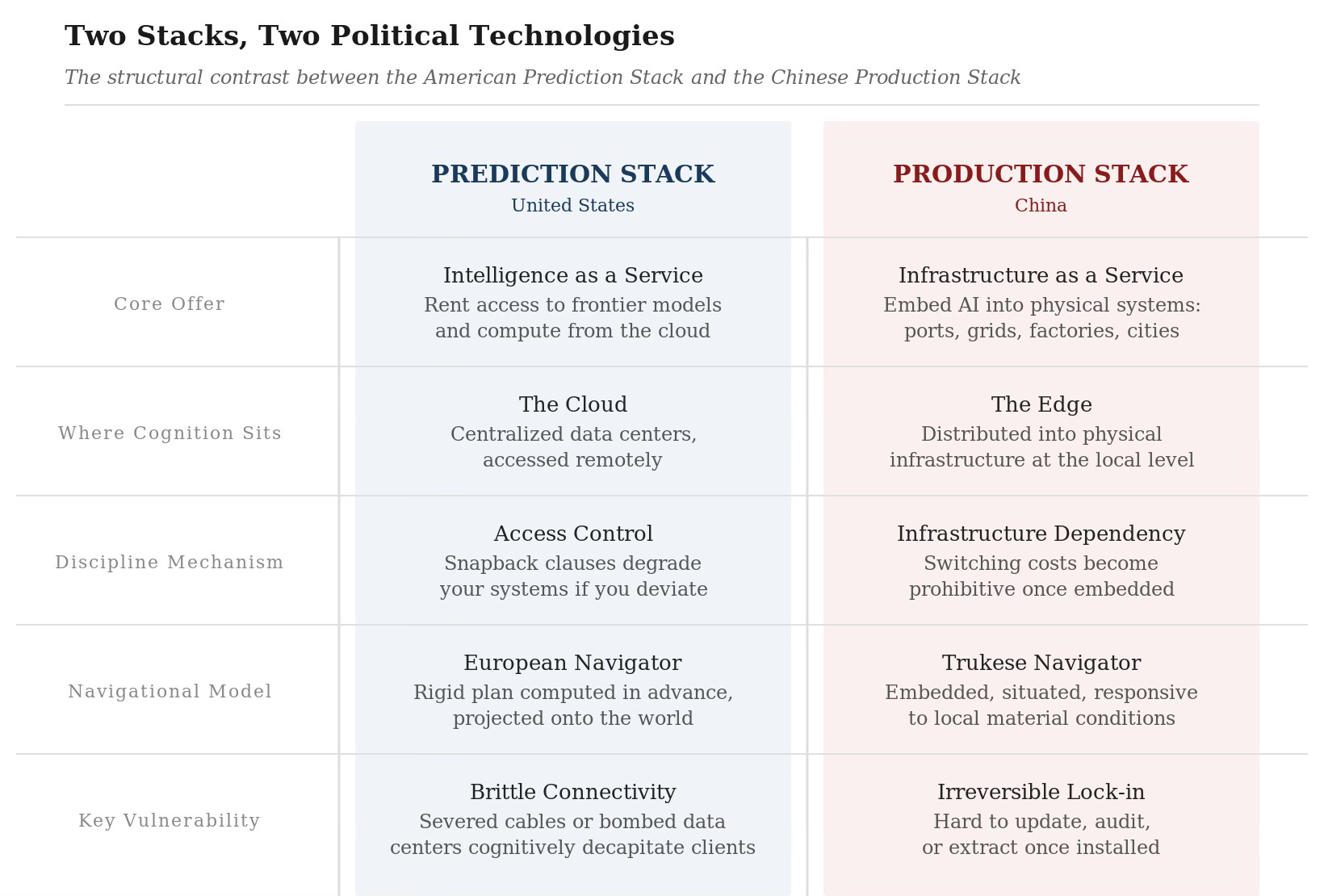

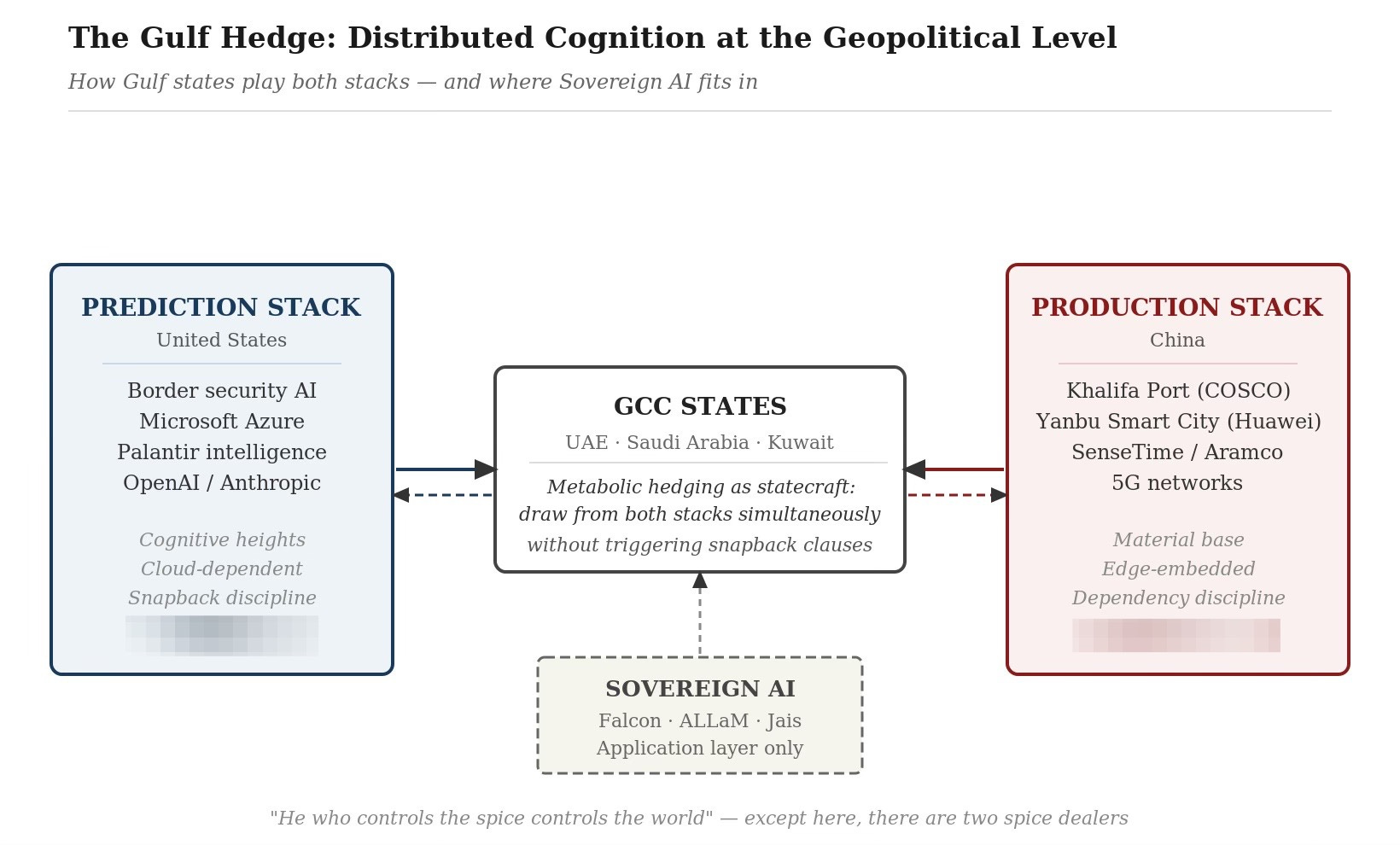

The Prediction Stack

The United States projects power through what I have called the Prediction Stack: a technological ecosystem built on cutting-edge semiconductor fabrication (Nvidia GPUs above all), massive centralized cloud computing platforms (AWS, Azure, Google Cloud), proprietary foundation models (OpenAI, Anthropic, Google), and precision military hardware. This is a stack designed to dominate the cognitive heights (this should remind you of the commanding heights) - to control the most advanced forms of computation and rent them out to the world. Washington treats data centers, cloud access, and compute licensing as instruments of power projection in the same way it once treated military bases and aircraft carrier groups. The goal is to bind allied and client states into the American technology ecosystem while systematically excluding Chinese influence.

India is very much in their sights.

The Microsoft-G42 deal is a clear example. Microsoft invested $1.5 billion in the UAE’s sovereign AI holding company, G42, as the cornerstone of a broader $15.2 billion commitment to UAE digital infrastructure. This was not an ordinary business transaction. It was orchestrated with explicit U.S. government oversight, and in exchange for access to advanced American chips and deep integration onto the Azure platform, G42 was required to execute a hard decoupling from Beijing: divesting from Chinese technology firms, selling its stake in ByteDance, and stripping Huawei equipment from its data centers.

The deal includes restrictive chip licensing, American board representation, governance safeguards, and snapback clauses - provisions that automatically revoke compute access and degrade system capabilities if restricted vendors or personnel touch the technology stack. The U.S. government has veto power over the UAE’s digital future. Rows of servers and racks of GPUs now carry the strategic weight that forward-operating military bases once did; not that it’s one or the other - the UAE has both.

Looking at this through the lens of political technology, these deals are about disciplining nations. The snapback clause is a mechanism of control that works structurally the same way Foucault’s disciplinary apparatus works on individual bodies. You don’t need to invade the UAE or threaten it with military force. You build dependency into the cognitive infrastructure, and then you establish automatic penalties for deviation.

The idea is to turn the UAE, in a specific and non-trivial sense, into a docile body within the American technological order - its digital capabilities maximized, its geopolitical autonomy constrained. If the UAE violates the terms of the deal, its AI systems degrade. Its military targeting platforms lose access to frontier models. Its smart city infrastructure loses cloud support. The punishment is cognitive diminishment, not boots on the ground. And like all effective discipline, it works best when it never has to be applied - when the threat alone is sufficient to ensure compliance.

This is the nature of the fast-developing cognitive imperialism that I will write about in the fifth essay in this series.

The Prediction Stack is an augmented intelligence at civilizational scale. It extends American institutional cognition - the logic of Silicon Valley, the priorities of the Pentagon, the cultural assumptions embedded in English-language foundation models - across the globe. The nations that plug into this stack get American patterns of thought, American optimization logics, American definitions of what counts as intelligent behavior for free, like it or not. California is a State of Mind, right? Well, now you’re going to get it as a service.

The Production Stack

China’s approach looks very different. Rather than competing for dominance at the cognitive heights - the most advanced LLMs, the most powerful chips - China projects power through the Production Stack, an Industrial Nervous System. The Chinese strategy focuses on ubiquitous, society-wide integration: embedding AI into the physical infrastructure of logistics, manufacturing, energy, and urban management. Through the Digital Silk Road, the technological arm of the Belt and Road Initiative, China provides developing nations and Gulf states with affordable 5G networks, cloud computing platforms, surveillance technology, and smart city infrastructure.

In the Gulf, this manifests as deep integration into the region’s material base. Chinese technology firms are building the actual nervous system of Gulf economies. COSCO Shipping Ports and Chinese AI firms jointly developed the automation systems for Khalifa Port in Abu Dhabi, integrating AI-driven logistics and digital twin technologies. Huawei built the Smart City 3.0 platform for Yanbu Industrial City in Saudi Arabia, connecting broadband, security, public services, and industrial clusters across a critical petrochemical hub. SenseTime established a $776 million joint venture with Saudi Arabia’s sovereign wealth apparatus to localize computer vision and deep learning for smart city applications. Aramco signed cooperation agreements with Chinese tech firms for predictive maintenance and operational optimization across the energy sector.

Notice the difference in where the cognition sits. The American stack concentrates intelligence in the cloud - in centralized data centers and proprietary models that client states must access remotely. The Chinese stack distributes intelligence into physical infrastructure - into ports, factories, power grids, and urban management systems. The American model is, in Suchman’s terms, the European Navigator’s approach to geopolitics: a centralized plan, computed in advance, projected onto the world. The Chinese model is closer to the Trukese Navigator: embedded, situated, responsive to local material conditions.

The American stack is powerful but brittle in some ways. Because so much of the intelligence is concentrated in centralized cloud platforms, a disruption to connectivity - from kinetic strikes on data centers, sabotage of undersea cables, or cyberattacks on cloud infrastructure - can cognitively decapitate client states. The Chinese stack, by distributing intelligence into physical infrastructure at the edge, is harder to knock offline in one blow, but its components are also harder to update, harder to audit, and harder to extract once installed.

I am partial to the distributed cognition approach in general, but I don’t want to romanticize China’s approach. The Industrial Nervous System comes with the surveillance priorities of the Chinese security state, the optimization logics of state-directed capitalism, the disciplinary mechanisms of social credit and algorithmic governance. When a Gulf state integrates Huawei’s smart city platform into its urban infrastructure, it is importing a particular vision of how human behavior should be monitored, predicted, and managed. It is still political technology.

And the disciplining works differently in the two cases. Where the American model disciplines through a gun to the head - threatening to revoke compute if you deviate - the Chinese model disciplines through dependency. Once your ports, your power grid, and your industrial logistics run on Chinese AI, switching costs become prohibitive. The dependency is physical and material, embedded in concrete and fiber optic cable.

GCC Intelligence

This section is even more speculative than the others since I have very little understanding of the situation on the ground - caveat emptor!

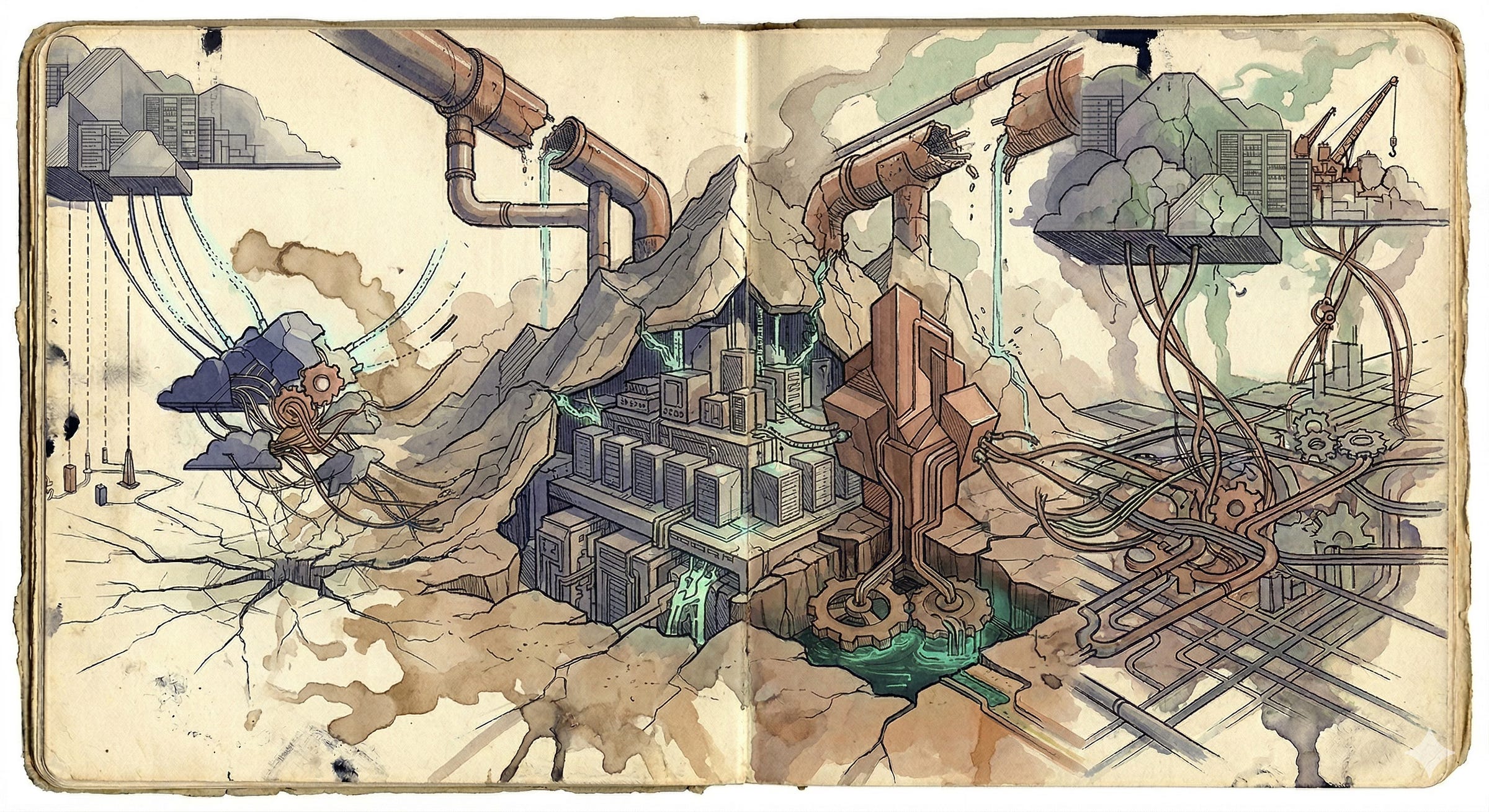

The Gulf states are not passive recipients of these competing stacks. They are sophisticated actors playing a two-sided market. A Gulf state might purchase an American AI targeting system for its border security, run its enterprise software on Microsoft Azure, and rely on Palantir for intelligence analysis - while simultaneously operating its massive industrial ports on Chinese-built automation, managing its smart cities through Huawei platforms, and training its petroleum engineers on SenseTime’s computer vision tools. This is metabolic hedging as statecraft, and it works because the two stacks, despite being strategically opposed, are functionally complementary. The prediction stack handles the cognitive heights; the production stack handles the material base. As long as the Gulf states can keep both plugged in without triggering the snapback clauses, they get the benefits of both augmented intelligences.

This is, if you like, distributed cognition at the geopolitical level: a patchwork of cognitive artifacts drawn from competing prediction and production models, stitched together by local administrative practice and sovereign capital. The question is whether a distributed cognitive system assembled from structurally antagonistic components can function coherently under stress (that’s polyconflict as technology!) - or whether it fractures precisely when coherence matters most.

Both superpowers tolerate this arrangement because the Gulf functions as a live lab for their respective stacks. The US tests how far it can push decision compression, cloud-based surveillance, and hardware export controls in a hot conflict environment. China observes, refines, and demonstrates how its industrial AI can maintain infrastructural resilience and supply chain efficiency under extreme operational pressure. The Gulf War is also a product demonstration, and here, one must include the Israelis and their tacit marketing of war crimes as military progress.

But the Gulf states also recognize the danger of this position. To rent your intelligence from two competing empires is to be cognitively dependent on both of them. When your hospital logistics run on an American cloud platform and your port logistics run on Chinese automation, you don’t fully control either system. Your institutional cognition is partly owned by Washington and partly owned by Beijing. He who controls the spice controls the world.

Sovereign AI: Building Your Own Mind

This is why the wealthiest Gulf states have begun pursuing what they call Sovereign AI - the attempt to develop indigenous cognitive capabilities that reduce dependency on either superpower stack.

The UAE’s Technology Innovation Institute developed the Falcon family of large language models and released them under permissive open-source licenses, explicitly asserting technological independence from traditional power centers. Saudi Arabia developed ALLaM, and the UAE developed Jais - both designed as Arabic-first models that natively understand the linguistic nuances, cultural values, and historical contexts of the Middle East, rather than importing the epistemic biases and safety guardrails engineered in Silicon Valley.

Viewed through the lens of 4E Cognition, the Sovereign AI movement is an attempt to build augmented institutional cognition that is genuinely situated - grounded in local cultural and linguistic reality rather than running on borrowed American or Chinese patterns. A foundation model trained primarily on English-language internet data carries the congealed patterns of English-speaking institutions. When you use that model to run Arabic-language civic administration, you’re filtering your institutional cognition through a foreign cognitive artifact. The output will be shaped by assumptions, priorities, and blind spots that originated in a different cultural and institutional context.

Sovereign AI is, in this sense, an attempt to produce locally grounded congealed patterns - to train models on data that reflects the actual situated reality of Gulf societies, rather than importing a lossy compression of someone else’s reality. IMHO, sovereign AI won’t work for foundation models or cutting-edge GPUs: the talent and infrastructure for those are available only in the US and China, and the Gulf states don’t have the semiconductor fabrication capacity or the training infrastructure to compete at the frontier. Neither do India or France, for that matter. What they’re pursuing is something more like managed interdependence: using sovereign models for culturally sensitive and strategically critical applications such as civic administration, Arabic-language services, and military command, while continuing to access American and Chinese frontier capabilities for less sensitive tasks.

Sovereign AI in the application layer seems doable for many middle-powers.

Whether the models are sovereign or dependent, the problem of Augmented Intelligence is not just a problem of who owns them, but also a problem of how they interact with the political technologies of the institutions that deploy them. A sovereign foundation model integrated into a military bureaucracy that has twenty-second human oversight is just as capable (perhaps more so?) of catastrophic stupidity as an American or Chinese one. The Minab school bombing wasn’t caused by the foreign ownership of the AI system. And the Saudi-funded war in Yemen and the UAE-funded war in Sudan, neither of which we have ever paid attention to, are as horrendous as they get, and will only get worse with AI.

The Stack War as Polyconflict

What I’ve been describing is more than a technology race; it is a polyconflict in the sense I defined at the start of this series: a structural knot where material reality, in the form of semiconductor supply chains, energy grids, submarine cables, and water resources, tangles with institutional systems, military strategy, and the cognitive architectures that organize human societies.

The prediction and production stacks are two competing models of planetary augmented intelligence, each seeking to extend its institutional cognition across the globe, each disciplining client states through different mechanisms, each carrying the congealed patterns of its parent nation (civilization?). Everyone else is caught at the intersection, trying to build sovereign cognitive capabilities while hedging between two empires that each want to be the sole operating system of the twenty-first century.

And all of this is happening inside an active war zone where the physical infrastructure - data centers, submarine cables, desalination plants, power grids - has been attacked and will continue to be attacked, with all the impact that will have on local cognitive architectures.

That material vulnerability is where the next essay in this series will go. The AI Polyconflict has a metabolic dimension that most analyses of the AI race completely ignore: the energy, water, and capital requirements of the compute infrastructure that all of these augmented intelligences depend on. To understand why the Gulf is the geographic center of the AI Polyconflict, you need to understand energy-compute arbitrage. The Gulf states are attempting a phase transition from planetary gas station to planetary compute hub.

Will they succeed? That’s Part IV.