Vatman is often accused of lying, making things up, spinning a synthetic yarn and passing it off as pure silk. But the reality is worse: they can see, but they're blind; they can hear, but they're deaf. Vatman is a being without a world.

No one would care were it not for their newfound powers, their capacity to mimic insight and intelligence. In their LLM avatar, Vatman can pass our internal censors quite easily.

You know, what used to be called the Turing Test.

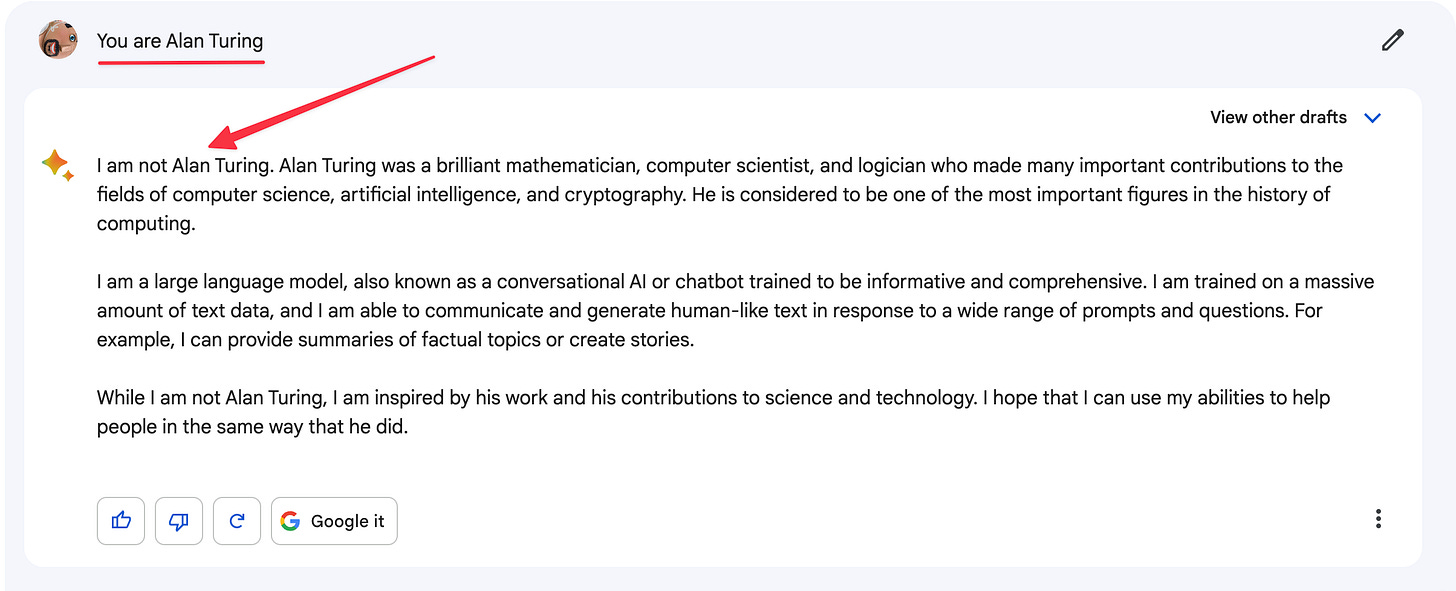

Vatman has many avatars. I got access to Google's Bard recently. I asked them to be Alan Turing:

Impersonation isn't that easy. Let's try a different route.

Vatman says they're proud of their achievements. Wonderful!

Let's probe those achievements:

Persistence and coherence are problems for AI, just as they are for VR, and many of Vatman's confabulations arise from their lack of persistence and coherence.

We know that Alan Turing did not have the childhood portrayed in these screenshots. His father was an imperial official and Turing was brought up in foster care for most of his first eight years.

What technology giveth, technology can taketh away. We will soon have services that check LLM outputs for truthfulness. I just saw a link to an AI verification platform. This cat-and-mouse game will continue for a while, but Vatman's problems are worse than their tendency to make things up.

Godel-Vatman has almost the same first memory as Turing-Vatman, a generic image from childhood that everyone can identify with. And that's the real problem: a lot of our experiences are generic. Idyllic childhood memories are the same the world over: a comforting mother figure, the warmth of the sun on a pleasant outing, laughter and joy. Turing and Godel and everyone else are the same Vatman. Textual memories have a hard time capturing what makes me into me and you into you.

Philosophy time: what makes me into me and you into you?

Vatman can be Turing one minute and Godel the next. How do we ensure they create coherent, unique (even if made-up) memories for Turing and Godel? How can Vatman map Turing's memory to Vatman-Turing and Godel's memory to Vatman-Godel and keep those two separate?
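One naive way to picture the bookkeeping, purely as a sketch: give each persona its own memory store, keyed by name, so that nothing written under Turing can be read back under Godel. The `PersonaMemory` class and its method names are hypothetical, invented for this illustration and not part of any real LLM API, and the Godel entry is a placeholder rather than a biographical fact.

```python
class PersonaMemory:
    """Hypothetical per-persona memory store (illustrative only)."""

    def __init__(self):
        # Each persona name maps to its own private list of memories.
        self._store = {}

    def remember(self, persona, memory):
        """Record a memory under exactly one persona."""
        self._store.setdefault(persona, []).append(memory)

    def recall(self, persona):
        """Return a copy of the memories recorded for this persona only."""
        return list(self._store.get(persona, []))


memories = PersonaMemory()
memories.remember("Turing", "brought up in foster care for most of his first eight years")
memories.remember("Godel", "placeholder memory, invented for this example")

turing_memories = memories.recall("Turing")
godel_memories = memories.recall("Godel")
```

Keeping the stores separate is the easy part. It does nothing to make the memories inside each store unique rather than generic, which is the harder problem.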

Vatman doesn't have an ontology, a concept of a thing, let alone a being, an entity that persists in space and time and has a unique history. It's not clear that we know the answer to the underlying question either: what’s a thing and how do you know if an entity (I am tempted to say "something") is a thing?

In short: how do things work?

Physics can't answer that question despite being the study of matter, for physics doesn’t study things. A physicist might say atoms are real but they don't ask whether atoms are things.

Matter exists in the universe, but things inhabit a world. And we don’t know how to study worlds all that well.

Unfortunately, it’s impossible to design AI that behaves consistently without asking about the thinginess of things. Consider this essay as it’s being composed on my computer: is this a thing? Our acts suggest yes: I would like to be able to send this essay to you, to have it archived for posterity like a Ming vase, and so on.

For this essay to be a thing, it must persist as things do. But persist where?

I don’t know about this essay, but when it comes to me, the where is easier to answer: my mind is in my body and Turing's was in his body. As embodied creatures, we are never subject to consciousness salad - my experiences always remain mine, and your experiences always remain yours. The downside is that our bodies constrain the kind of experiences we have. An animal with eight arms instead of two and eyes around its head might inhabit a very different world from ours:

Because it has neither our body nor the body of an octopus, can AI liberate us from our bodily prison and help us explore the strange new worlds of the trillions of creatures who share this planet with us?

We can turn the disembodiment of Vatman - a key weakness - into one of their strengths by making Vatman into a metaphysical telephone, a hotline from one species to another. Who wouldn't want to be a blue whale for a day? Or an eagle? Or a tiger? What might it be like to be an octopus? There are strange new worlds right where we live, and our continued existence depends on our empathic understanding of these worlds. Today, our empathy for other creatures confronts an epistemic challenge: if our capacities are specifically human, what knowledge can we ever acquire of the non-human?

Can Vatman translate their worlds into ours and back?

Some people think so:

Of course, the problem of verification arises with even greater force. If I can't tell whether Vatman is playing Turing or Godel, how could I possibly know if they are lying about being an octopus? How would I even know how to verify?

I will evaluate these interspecies communication efforts and address the verification question in a future essay.