The State of Algorithmic Politics

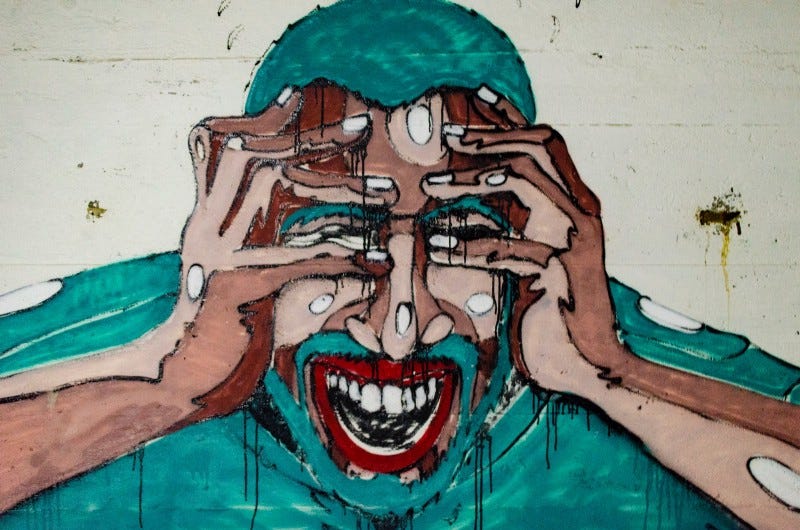

Emotional Truths

Why is it that, at a time when the future of human existence is threatened by climate change, the future of work by automation and the future of every other living being by humans, we are increasingly electing regimes guaranteed to destroy life as we know it?

That question haunts me every day.

There’s a frightening answer: that without careful design and collective struggle, our default state might be to increase authoritarianism, clamp down on dissent and erect new borders while strengthening existing ones. That technology, which was supposed to make our lives better, is making them worse.

I have no doubt that technology plays a big role in making the authoritarian camp stronger; the romantic in me thinks it will also play a big role in imagining a better future, but the current moment belongs to those fighting for their share of a shrinking pie. One way they’re able to take more than their fair share is by drawing our attention away from where it needs to be, shifting our gaze towards powerless victims instead of tackling the problems created by the powerful.

Nevertheless, the authoritarians get it right in one respect: they articulate a world in crisis better than anyone else; their atmosphere of fear is more believable than the liberal intelligentsia’s vague pronouncements of universal humanity. It’s only when that fear congeals in the form of immigrants and traitors rather than corporations and the 1% that a falsehood is perpetrated. Whatever its problems with facts and reason, the right wing understands emotion better than progressives.

Not all progressives, though: the school kids on strike saying “You will die of old age, we will die of climate change” are getting the emotional register exactly right, which is why their movement is spreading without any money, power or central leadership. Unfortunately, having money and power makes it easier to spread your emotional register; recent events in India are a good case in point.

Photographer: Aarón Blanco Tejedor | Source: Unsplash

Algorithmic Politics in Kashmir

If you’re from my part of the world, you know that the Modi regime has changed the equation between the state of Jammu and Kashmir and the central government. I don’t have anything original to say about the politics of the event (read Srinath’s piece if you want a deeply informed overview); instead, I want to direct your attention to how the event was managed.

The press has been talking about how the announcement was preceded by the cancellation of the Amarnath yatra, the airlifting of thousands of soldiers and the house arrest of the entire Kashmiri political class. All true, but they miss an element whose consequences might be even longer-lasting: the entire internet was shut down in Kashmir and remains so. You can’t WhatsApp your friends, you can’t send them videos on TikTok or Snapchat, and you can’t use messaging to organize protests or rallies.

In case you didn’t know, India leads the world in temporary internet shutdowns. From local bureaucrats to the Home Minister, government officials cite public security as a reason to suspend what has become the normal mode of communication for most Indians. Since the medium is the message, the politics of free speech is the politics of the internet. Shutting down WhatsApp, however temporarily, is how the government controls people’s minds.

Moreover, the shutdown is temporary by design.

Attention being the scarcest resource today, the way to control our minds is to control our attention, whether by making us focus where businesses and governments want us to (let’s call those white holes) or by creating black holes of information where they would rather we didn’t look. There’s no advantage in making a black hole permanent, because attention is fickle and keeps shifting from one spectacle to the next. Smart governments and businesses are constantly creating and destroying white holes and black holes. From managing expectations about jobs to creating new images of anti-nationals, every modern state is in the business of constantly focusing and refocusing our attention. Incidentally, the Chinese version of attention management during crises is subtler than the Indian version: instead of shutting down the internet, they have hundreds of thousands of people whose only job is to deflect attention away from the crisis by flooding social media and bulletin boards with innocuous posts.

The decision to shut down the internet in any district or state is an impromptu one, made by some official who is handling many different pressures. That is why I am skeptical of conspiracy-based causal explanations, in which a hyper-intelligent cabal of scheming businessmen and politicians directs our minds as they see fit. Instead, I am more inclined to believe that the rapid shifts of collective attention are systemic properties that can’t be ascribed to individual manipulators. The human visual system saccades roughly every 300 milliseconds without any underlying motive or purpose. The winners at algorithmic politics are those who understand the inherently complex nature of the underlying system, just as the control systems in our brains that direct intentional visual search are built upon a layer of random saccadic movements.

In hindsight, it’s clear that print and broadcast media (newspapers, radio, TV and so on) created new forms of democratic politics as well as new forms of authoritarianism. Why would it be any different with algorithmic media? Of course we are going to see new forms of politics: both the Arab Spring and the Kashmir crisis are political responses to a new technological condition.

Question: Is resistance futile?

Answer: Yes, unless the treehuggers figure out how to capture and manage attention as well as the treecutters do, and to do so, they have to grasp how the attention economy differs from ideology and propaganda.

https://www.flickr.com/photos/jamesseattle/32204594560/

Attention Management

Now I come to the central point of this essay: the algorithmic management of attention is substantially different from what we used to call propaganda, just as paying Google to rank highly on certain keywords is substantially different from launching a traditional print ad campaign. Yes, both are forms of advertising, but there’s a world of difference in how the ads are placed in front of customers and what customers do with an ad once its message attracts them. Political messaging, too, is far more targeted today. Propaganda identifies a uniform, faceless threat: the Jew, the communist, the Muslim. In contrast, algorithmic violence, in its ideal form, is personalized, localized and context-dependent.

It’s about identifying a specific yet random individual who carries an unwanted identity. Specific in that it’s a particular black or Muslim or LGBT person who happens to be in your vicinity. Random in that the perpetrators of violence couldn’t care less about that person’s individuality as long as they belong to a certain target identity. Specific yet random is the logic of “personalized” attention in the age of machine learning. When someone says personalized medicine is coming, they don’t mean that doctors will learn who you are as an individual and prescribe medicines accordingly. They mean that doctors will use patterns in genetic data, dietary habits and life history to prescribe medicines, and that the same personalization will work reasonably well for another person whose genetic patterns are close enough to yours.

Similarly, when Google shows ads based on your browsing history, it uses your statistical footprint as the input to its predictive engine, without caring whether you are a real person or a robot. The statistical person is often a reasonable proxy for a living, breathing individual, but important principles are lost in translation.

Specific randomness is the underlying model of the gig economy. When I order a cab on Ola or Uber, I am getting a specific driver, an actual human being who sits behind the wheel. At the same time, I don’t care much about him besides the fact that he’s a qualified and licensed driver and that the car is reasonably neat and functional. He can be replaced by another person without any loss of customer experience. To the extent that the gig economy is the future of employment, specific randomness tells us where jobs are going until they are all replaced by robots.

In any case, the widespread availability of specific randomness is affecting politics as much as business. That’s one reason we are seeing new forms of political violence emerge from algorithmic media: in India, we see it in the eclipse of the riot and the emergence of lynching as the chief instrument of street violence. In the US, we are seeing increasing numbers of mass shootings. In both cases, it’s as if a machine learning algorithm had infected the brain of a lynch mob or a gun-toting avenger and turned his mind to violence. In propaganda, there’s a strong connection between the official party line and the violence on the street: intellectuals were murdered during the Cultural Revolution because Mao said so. In contrast, there’s a tenuous link, if any, between the pronouncements of Trump and the shooter on the street.

We don’t know how deep learning algorithms pick out the features that make them good at recognizing cats in videos. As Judea Pearl keeps saying, causality is a hard problem for the machine learning that drives today’s AI. I believe that understanding the causes of spontaneous violence is an equally hard problem for algorithmic politics, for the same reasons. And it’s obviously more important to understand the emotional causes of algorithmic politics than the causal structure of cat videos.

Google doesn’t care whether it understands the causality behind its models, as long as they are predictive. Every once in a while its algorithms will make obvious mistakes or contribute to racial profiling, but that’s the price of doing business. In contrast, progressive politics of any kind has to care about real people (or real animals, if you’re an animal rights person like me), and therefore questions of causality are crucial.

Let me end this essay with a provocative possibility: that the future of politics isn’t a contest between left and right, but between predictors and explainers. Predictors use data to drive people’s emotions in the direction they want without caring who is hurt and how. Their target is the specific yet random person. Predictive politics is the political equivalent of Google AdWords. In contrast, explainers care about the actual people behind the statistical signatures. Progressive politics should privilege explanation over prediction. It’s harder in every sense of the term.