Preface

Over the course of this series, I have traced the AI Polyconflict through four interlocking threads. Part I examined the compression of the kill chain and the cheap drone paradox. Part II built the theoretical foundation: Augmented Intelligence as the merger of distributed cognition and political technology, militaries as artificial intelligences, and the new cyborg as fearsome and structurally stupid. Part III mapped the Stack War between the American Prediction Stack and the Chinese Production Stack, with the Gulf states hedging between them. Part IV revealed the metabolic dimension of both AI Stacks - the energy, water, and capital that the compute infrastructure demands.

In this last and most speculative essay of this series, I want to weave these four threads into one cloth.

The Highest Stage of the Algorithm

In 1902, the British economist J.A. Hobson published a study of imperialism that identified its root cause as domestic inequality. The working classes could not afford to consume what they produced. Capital accumulated at the top with no profitable domestic outlet. This excess capital had to go somewhere, so it went abroad - seeking new markets, raw materials, and investment opportunities in territories that could be politically controlled. Territorial imperialism was the military and political expression of an economic problem that could have been solved at home through redistribution, but wasn’t.

Lenin sharpened Hobson’s analysis in 1916 with Imperialism, the Highest Stage of Capitalism. For Lenin, the key transition was from the export of manufactured goods to the export of finance capital. Banks, cartels, and monopolies no longer needed to sell things to foreign markets. They needed to invest in them - to export capital itself, which would then generate returns through the control of foreign productive capacity. This was imperialism’s mature form: not armies conquering territory for its own sake, but finance capital dividing and redividing the world among monopolies, with armies following to enforce the arrangement.

I want to suggest that the global economy is now entering the highest stage of the algorithm: the transition from the export of finance capital to the export of inference capital (which is tied to a longer analysis of how inference might replace money one day, but that would take us too far afield). The pattern is similar to what Hobson and Lenin described, but the commodity being exported is different. It is not cotton, or steel, or even money. It is cognition, or if you prefer, intelligence.

Artificial Intelligence functions as the ultimate absorber of excess capital. At a time of staggering global inequality, hyperscalers and tech monopolies are pouring hundreds of billions into AI infrastructure - chips, energy, real estate, cooling systems - while material human needs go unmet across most of the globe. Unlike physical railroads or oil wells, the raw material of AI - data and compute - is infinitely scalable in principle. That makes AI a bottomless sink for stagnant wealth, and the most attractive investment frontier since colonial real estate.

But capital expenditure of this magnitude must yield a return. The technology must find new markets to monetize, and in the process uncover more data that feeds into the next stage of expansion. The two competing stacks are establishing a system in which every nation on earth, from Nigeria to India to the Gulf states, is encouraged (required?) to rent its intelligence through cloud platforms owned by a handful of corporations in California and, on the other side, to embed its physical infrastructure with AI systems manufactured in Shenzhen.

Macaulay’s Mirror

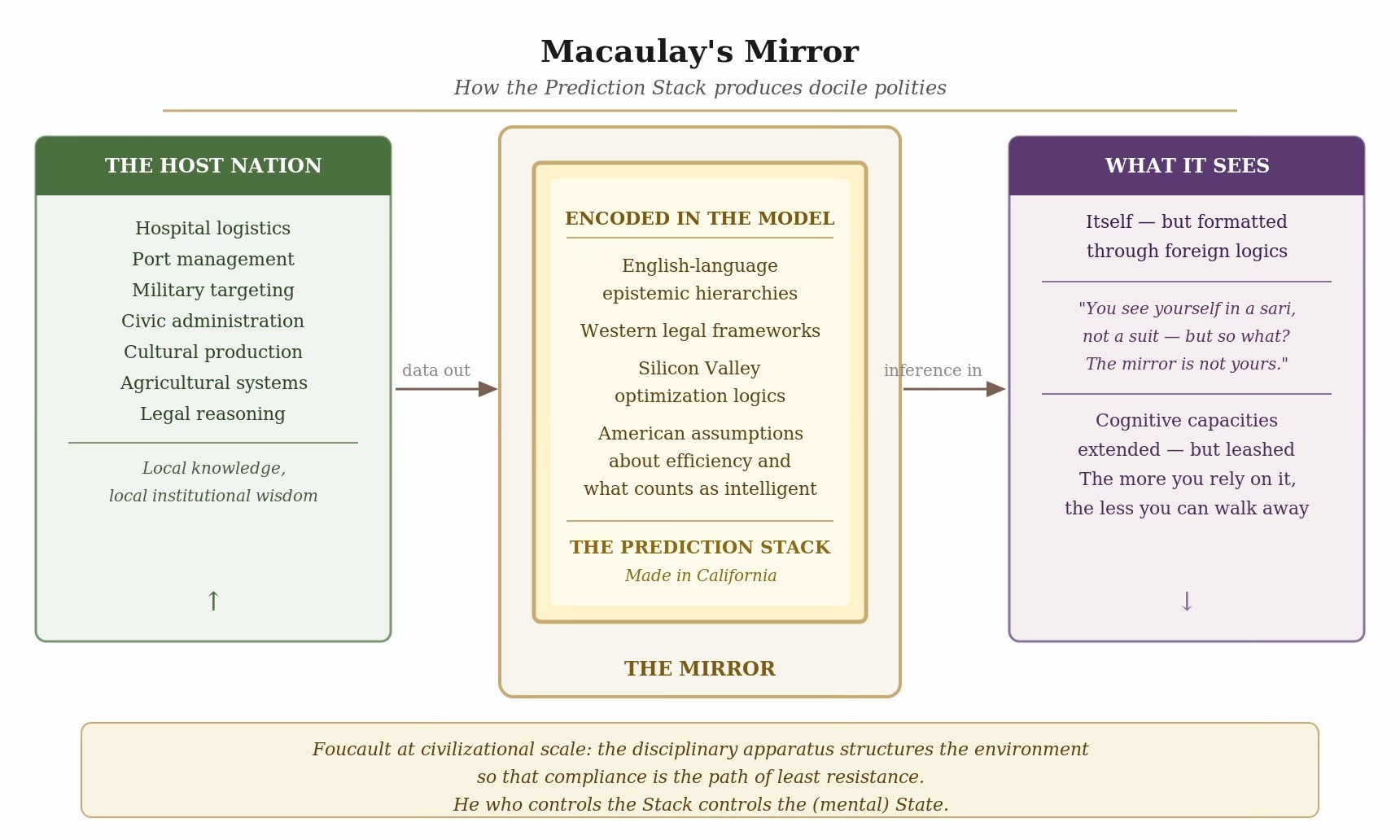

This is where the framework I built in Part II becomes essential. Foucault’s political technology was originally a theory of how institutions produce docile bodies - individuals whose physical capacities are maximized while their political autonomy is minimized. The drill sergeant formats soldiers. The prison formats inmates. The school formats students. In each case, the disciplinary apparatus works by structuring the environment so that compliance becomes the path of least resistance.

The Prediction Stack - the American AI architecture I described in Part III - is a political technology of this kind, but operating at civilizational scale. It produces docile bodies within docile polities. We have already seen this with social media, where disciplining at population scale is a political reality across the world. When a nation’s hospital logistics run on a proprietary model owned by a Silicon Valley corporation, when its port management relies on cloud infrastructure controlled from Virginia, when its military targeting depends on algorithms licensed from American defense contractors, and when its civic administration operates through platforms whose optimization logics were designed for a different culture in a different language - that nation’s institutional cognition has been formatted by an external disciplinary apparatus. Its cognitive capacities have been extended and it can do things it could not do before, so the leash is long. But it’s there.

Classical imperialism extracted raw materials like rubber, cotton, minerals. Cognitive imperialism extracts data: the accumulated behavioral, cultural, and institutional output of entire societies, compressed into training sets that feed the next generation of foundation models. One fear is that the patterns congealed in those models encode the priorities of the extractor - English-language epistemic hierarchies, Western legal frameworks, Silicon Valley optimization logics, American cultural assumptions about what counts as intelligent, efficient, or normal. When a nation in the Global South operates its government through these models, it runs its institutional cognition through a foreign cognitive artifact. There’s legitimate fear that its own knowledge - its local institutional wisdom, its cultural context, its situated ways of understanding - is systematically underweighted in the statistical distribution.

But the greater fear is that the prediction provider is even more firmly in charge when it localizes its prediction engine for your geography; you will see yourself in a mirror made in California, and be happy that you are seeing yourself in a sari, not a suit, but so what? The mirror is not yours, and the more you get used to seeing yourself in it, the less you can walk away from it. You do not need to occupy a nation’s territory and extract its minerals. You occupy its cognitive infrastructure - the tools, the platforms, the models, the optimization logics through which its institutions think - and the territory follows.

In 1835, when British power in India was ascendant and the British were confident about the superiority of their race and their civilization, Thomas Babington Macaulay wrote his Minute on Indian Education, in which he says:

I have never found one among them who could deny that a single shelf of a good European library was worth the whole native literature of India and Arabia. The intrinsic superiority of the Western literature is, indeed, fully admitted by those members of the Committee who support the Oriental plan of education....We have to educate a people who cannot at present be educated by means of their mother-tongue. We must teach them some foreign language. The claims of our own language it is hardly necessary to recapitulate. It stands pre-eminent even among the languages of the west....We must at present do our best to form a class who may be interpreters between us and the millions whom we govern; a class of persons, Indian in blood and colour, but English in taste, in opinions, in morals, and in intellect.

Macaulay was more successful than he thought, but his successes will be nothing compared to what AI will usher in. He who controls the Stack controls the (mental) State.

Compute Stations and the Comprador Class

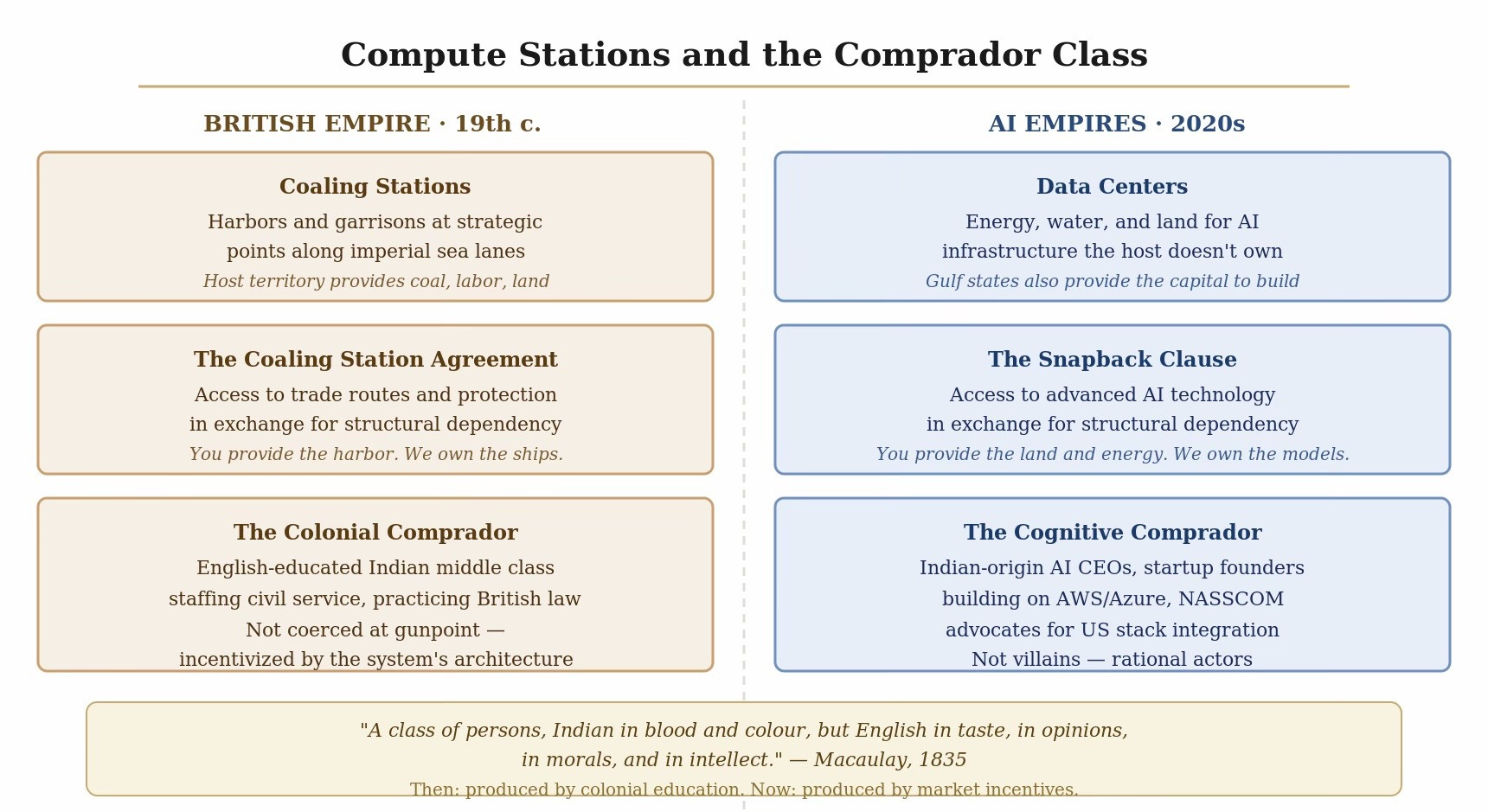

The British Empire required physical coaling stations across the globe to project naval power. Ships needed to refuel, so Britain secured harbors, built infrastructure, and stationed garrisons at strategic points along its sea lanes. The host territories provided the coal, the labor, and the land. They did not own the ships.

The AI empires of the 2020s require compute stations. Data centers need energy, water, and land. The host nations provide these metabolic inputs - and, in the Gulf’s case, the capital to build the facilities - to sustain an intelligence they do not fully own, control, or understand. The snapback clauses and governance safeguards are the twenty-first-century version of the coaling station agreement: access to advanced technology in exchange for structural dependency.

The comprador class follows from this arrangement. In the history of European imperialism, the comprador class consisted of local agents who facilitated resource extraction on behalf of foreign empires. They were intermediaries - materially rewarded, culturally shaped by the imperial center, structurally necessary for the system to function. They were what Macaulay called ‘a class of persons, Indian in blood and colour, but English in taste, in opinions, in morals, and in intellect.’ The British Raj in India ran not on British administrators alone but on an English-educated Indian middle class that staffed the civil service, practiced British law, and managed the extraction of revenue from the countryside. They were not coerced at gunpoint. They were incentivized by the architecture of the system.

Cognitive imperialism will produce its own comprador class: the local elites, technologists, and policymakers who facilitate the integration of foreign cognitive infrastructure into their societies. They are not villains; they are rational actors responding to incentives.

India makes this visible at scale. India’s entire technology services economy - the Infosys-TCS-Wipro model - was built on cognitive labor extraction: the body shop. Indian engineers processed information for Western corporations at low cost, providing execution capacity without intellectual ownership. This was already cognitive semi-colonialism. The next stage is worse. AI now replaces the Indian IT worker while the AI itself remains owned by the same Western corporations. The software engineer in California who is replaced by AI has some political recourse, but when Oracle fires 12,000 engineers in Hyderabad in one day, to whom can they appeal?

The Indian comprador is doing very well for themselves, thank you. Indian-origin CEOs of American AI companies - Sundar Pichai at Google, Satya Nadella at Microsoft - are smart individuals making rational decisions for themselves. I might do the same. Indian startup founders are building thin application layers on top of AWS and Azure. The NASSCOM policy establishment has consistently advocated for India’s integration into the American tech stack. Like the pharmaceutical company CEO choosing to outsource active pharmaceutical ingredients (APIs) to China, we could be heading into a future where we are mere assemblers of AI systems, unprepared for a foundation model supply shock.

I don’t want to oversell the doom. India has mounted a more serious resistance than most. With Aadhaar, UPI, and ONDC, India has built genuinely impressive public digital infrastructure that is interoperable, open, and domestically owned. The IndiaAI Mission, Sarvam AI, and others are real attempts to build Indian-language models grounded in local linguistic and cultural reality. However, India does not have the semiconductor fabrication capacity, the training infrastructure, or the energy leverage to compete at the top of the stack. It is attempting managed interdependence, the same strategy as the Gulf states, but without the Gulf’s metabolic bargaining power.

The Great Enclosure

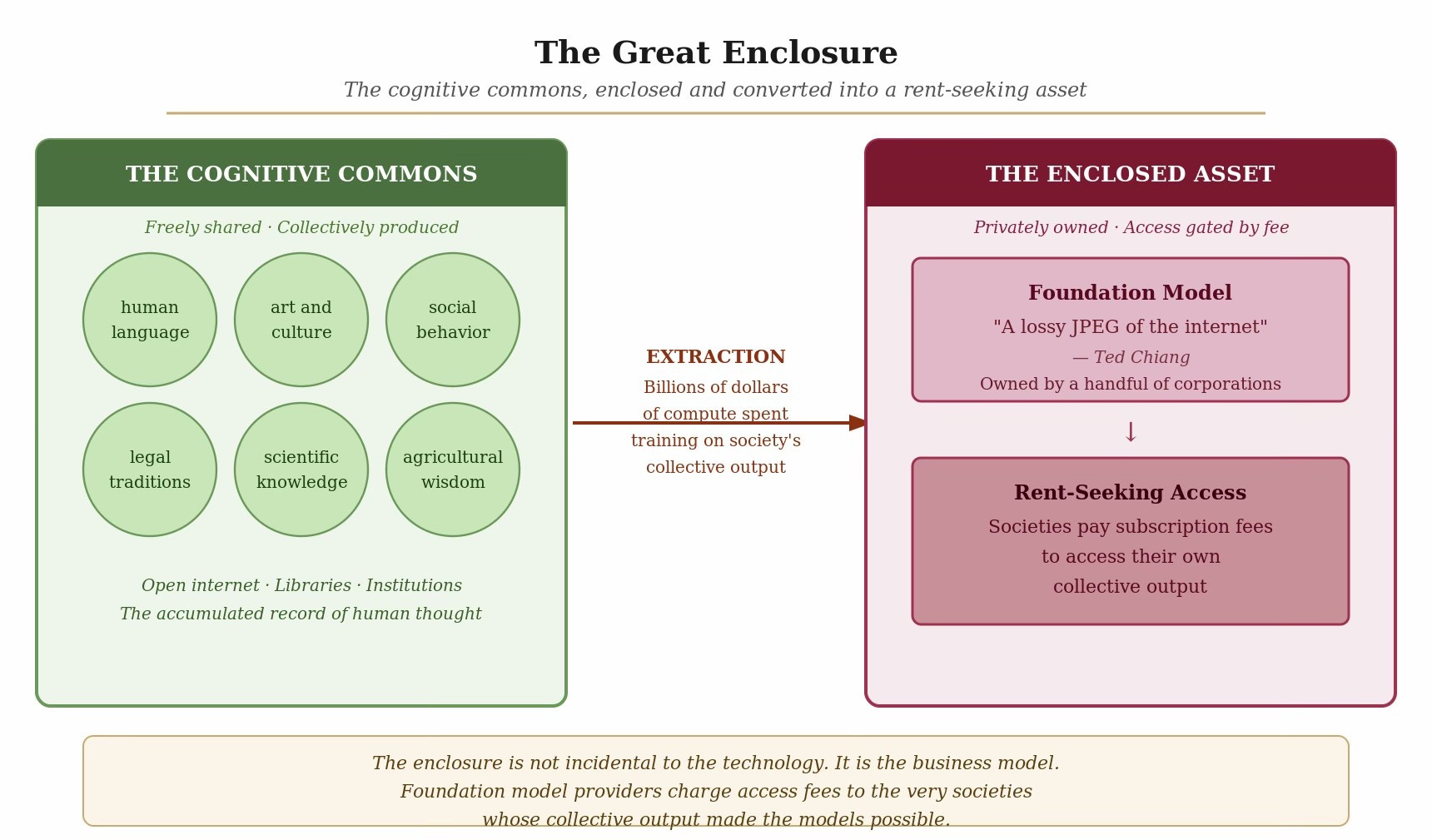

The AI Polyconflict potentially represents a Great Enclosure of the human mind. Between the fifteenth and nineteenth centuries, the enclosure of the English commons forced subsistence farmers off shared land and into wage labor, creating the proletariat that powered the Industrial Revolution. Land was privatized - transferred from a commons that sustained a community into a productive asset that generated returns for its owners.

Something similar is happening now. The collective intelligence of humanity - our aggregated data, our art, our social interactions, our institutional knowledge, our agricultural wisdom, our legal traditions, our languages - has been enclosed, extracted, and refined into a rent-seeking asset (if they can make any money!) controlled by a small number of corporations. Large language models are, as Ted Chiang observed, blurry JPEGs of the web.

The enclosure is not incidental to the technology. It is the business model. Foundation model providers spend billions training on the cognitive commons - the open internet, digitized libraries, public datasets, the accumulated record of human thought and expression - and then charge access fees to the very societies whose collective output made the models possible.

Public Intelligence

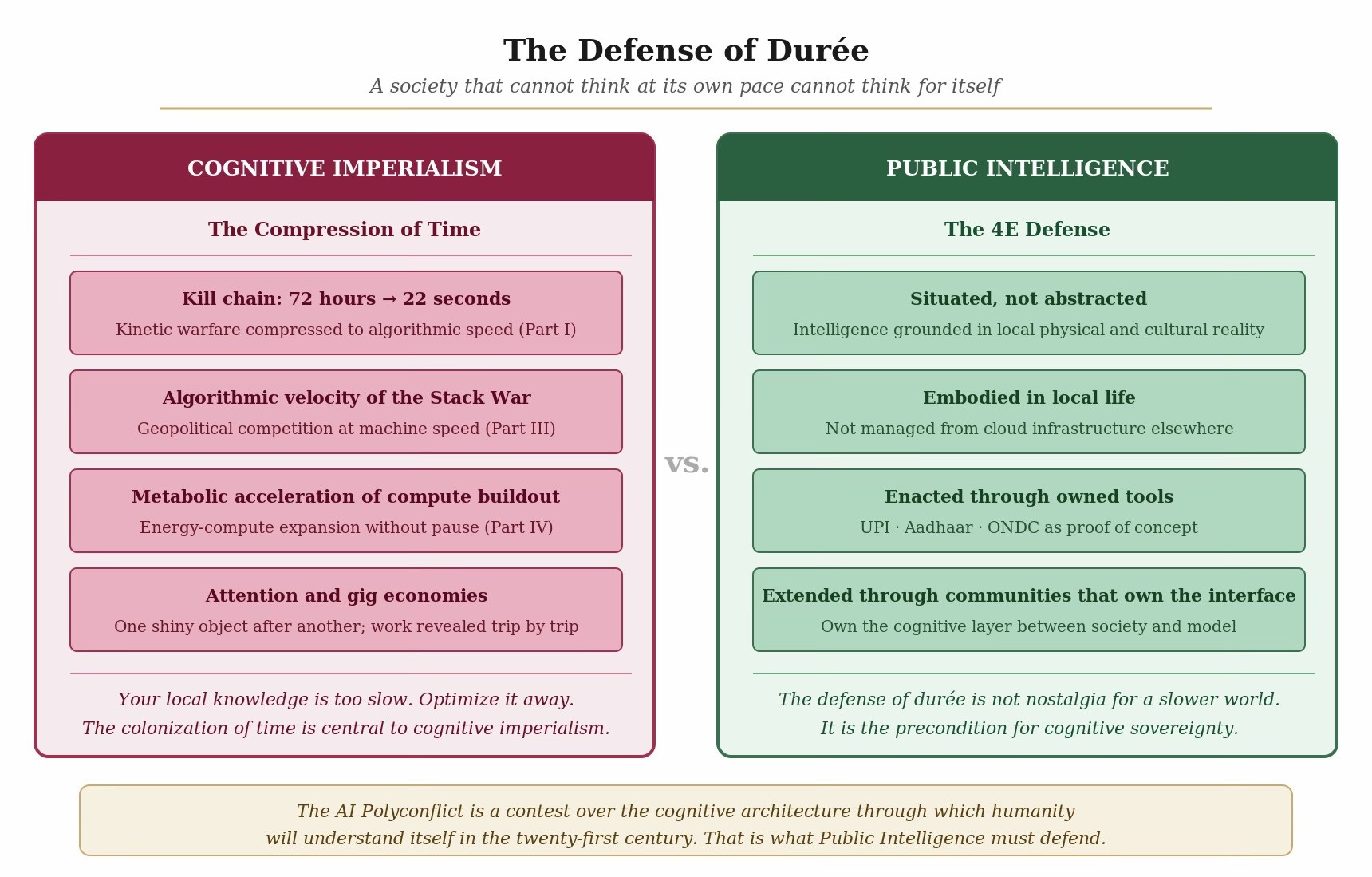

If the AI Polyconflict is a Great Enclosure, then the defense against it must be a form of Public Intelligence, and it needs to have teeth, not just good intentions. Public Intelligence means cognitive architecture that is genuinely situated rather than abstracted into cloud services owned elsewhere. It means intelligence that is embodied in local physical and cultural reality, embedded in local institutional context, enacted through local practice and knowledge production, and extended through tools and artifacts that communities actually own and control.

Public Intelligence has to be realistic for now: no nation can build frontier foundation models without access to the global semiconductor supply chain, and pretending otherwise is fantasy. But the application layer - the layer where AI actually touches governance, agriculture, healthcare, education, legal reasoning, cultural production - is defensible. India’s UPI is proof of concept: a public digital infrastructure that is interoperable, open, and domestically governed, even though it runs on hardware manufactured elsewhere. The question is whether this model can be extended to the AI layer - whether nations can own the cognitive interface between their societies and the frontier models, rather than ceding that interface to the model providers.

Across this entire series, from the twenty-second kill-chain confirmation in Part I to the algorithmic velocity of the Stack War in Part III to the metabolic acceleration of the compute buildout in Part IV, the consistent pattern has been the compression of human deliberative time. Cognitive imperialism colonizes space and time at once. The human durée - the lived time of ethical deliberation, cultural reflection, democratic consensus - is treated as inefficiency by the algorithm. Your local knowledge is too slow. Optimize it away. Compression of time goes hand in hand with its fragmentation; we have seen that already with the attention and gig economies, where the feed distracts us toward one shiny object after another, and the Uber or Swiggy driver’s workday is revealed only one trip at a time.

The colonization of time is central to cognitive imperialism.

The defense of durée is not nostalgia for a slower world. It is the precondition for cognitive sovereignty. A society that cannot think at its own pace cannot think for itself.

Closing

IMHO, the AI Polyconflict is a contest over the cognitive architecture through which humanity will understand itself in the twenty-first century.

The five threads of this series - the industrialization of lethality, the planetary cyborg, the Stack War, the metabolic loop, and the Great Enclosure - are facets of a single transformation. Human societies are being reorganized around augmented cognitive systems of unprecedented capability combined with stupidity, systems that carry the congealed patterns of their creators and impose those patterns on everyone they touch. The empires that control these systems - the prediction and production empires, with their different architectures but identical ambitions - are engaged in the most consequential division of the world since the Cold War. That division will pass through - sometimes fractally so - the Gulf, South Asia, Africa, and Latin America, whose capacity for self-governance is at stake, and that is what Public Intelligence must defend.