Preface

In Part I of this series, I traced how the Gulf War is exposing the AI Polyconflict through two concrete developments: the compression of the kill chain (where AI systems like Lavender and Project Maven are replacing human ethical deliberation with algorithmic speed) and the Cheap Drone Paradox (where $500 quadcopters are credibly threatening billion-dollar weapons platforms). I ended with a question that I want to pick up here:

How is it that cheap drones with embedded spatial intelligence are defeating legacy military systems that cost thousands of times more?

To answer that question, and to understand the AI Polyconflict at a deeper level, I need to step back from the Gulf and take a theoretical detour. Bear with me: this detour will pay off, because it changed how I see this conflict, and it frames everything that follows in this series.

My claim is this: to understand what AI is doing in the world right now, you need two ideas that most people working on AI, or on the world’s biggest problems, rarely pay attention to. The first comes from cognitive science: distributed cognition. The second comes from a certain strand of philosophy: political technology. When you put them together, you get a framework for understanding Augmented Intelligence - the actual thing we should be concerned about, as opposed to the science-fiction version of AI that dominates most public debate about AI doomerism.

Militaries Have Always Been Artificial Intelligences

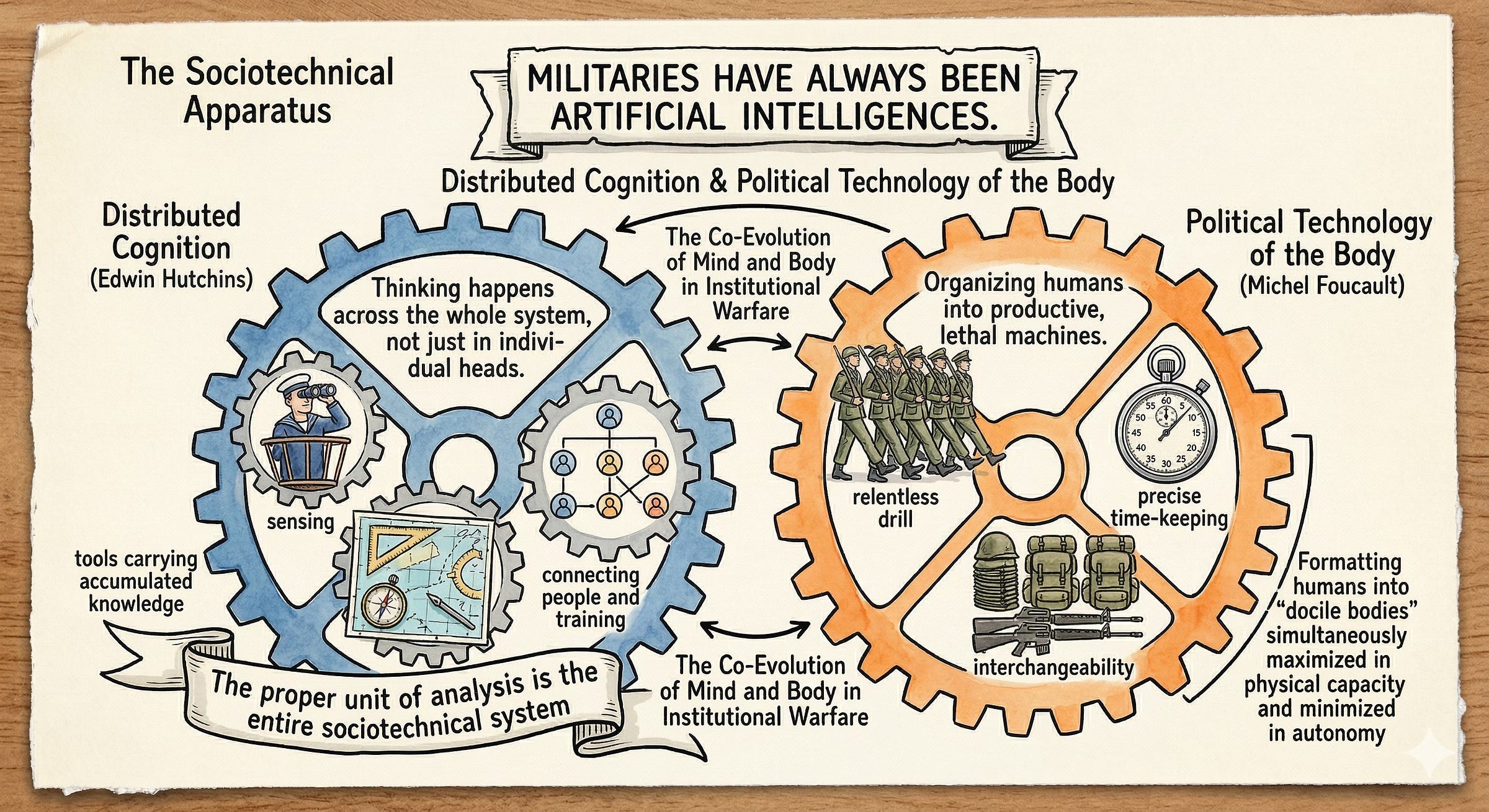

Let me start with distributed cognition. In 1995, the cognitive scientist Edwin Hutchins published a book called Cognition in the Wild. Hutchins had spent years studying how the crew of a U.S. Navy ship navigates into port, and what he found upended a basic assumption of cognitive science: that thinking happens inside individual heads.

It doesn’t, or at least not only there. When a Navy crew brings a warship into San Diego harbor, the thinking required to do so is spread across the entire bridge team and their instruments. One sailor takes a bearing through an alidade, another plots it on a chart, a third relays headings to the helm. The navigational tools themselves (the charts, the compasses, the plotting instruments) carry centuries of accumulated mathematical knowledge built directly into their physical design. A sailor using a scale doesn’t need to know trigonometry; the tool does the trigonometry for him. The intelligence of the operation doesn’t live in any one person’s brain. It lives in the system: the people, their training, their tools, and the social organization that connects them all.

Hutchins’ work was part of a broader movement in cognitive science now called 4E Cognition - the idea that human thinking is Embodied (grounded in the body), Embedded (situated in an environment), Enacted (emerging through action), and Extended (relying on tools and artifacts outside the skull). For Hutchins, the right unit of analysis for understanding cognition isn’t the individual mind. It’s the whole sociotechnical system.

Now the second idea. In Discipline and Punish, the philosopher Michel Foucault traced how modern institutions - prisons, hospitals, schools, and above all, militaries - developed specific techniques for reshaping human bodies and minds into standardized, controllable components. He called this a political technology of the body. The key innovation of modern military discipline, beginning around the turn of the seventeenth century with tacticians like Maurice of Nassau, was to take the chaotic mass of infantry and reorganize it through relentless drill, precise timekeeping, and rigid spatial ordering. Every movement was standardized. Every soldier became interchangeable. The military camp became a machine for producing what Foucault called docile bodies: people whose physical capacities were maximized even as their political autonomy was minimized.

Foucault’s point was that the military didn’t just use technology. The military was a technology - an artificial apparatus for organizing human beings into a productive, lethal machine. Long before anyone built a computer, the army was already a kind of computational system, processing information about terrain, logistics, and enemy positions through hierarchies of disciplined human processors.

As I keep saying: we have always been artificial.

Augmented Intelligence

Here’s where these two ideas converge in a way that I think changes how we understand AI.

If Foucault argues that militaries are artificial systems - political technologies that format human beings into standardized components - and Hutchins shows us that cognition in complex institutions is distributed across people, tools, and organizational structures, then juxtaposing their insights leads to an inevitable conclusion: militaries have always been artificial intelligences (see also “AI is a cultural technology”). They are vast, distributed cognitive systems composed of disciplined human elements, material artifacts, and institutional procedures, all organized to process information and produce lethal outputs.

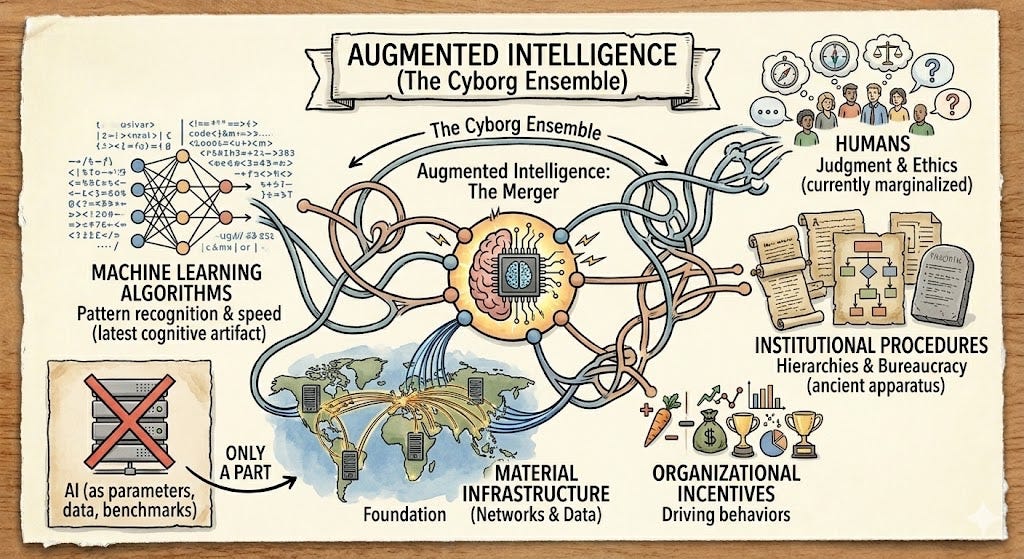

The Roman legion was an artificial intelligence. The Napoleonic staff system was an artificial intelligence. The thing we now call AI - machine learning using neural networks - is only the latest cognitive artifact to be plugged into this ancient apparatus. Like I said last week, what we’re witnessing today is better described as Augmented Intelligence: the merger of distributed human-institutional cognition with new machine capabilities that extend, accelerate, and sometimes replace elements of that distributed system.

Engineers building deep learning systems tend to think of AI as a property of the machine - its parameters, its training data, its benchmark scores. But when that machine gets deployed inside a military bureaucracy or a corporate supply chain, it becomes something else entirely. The intelligence that matters is no longer the machine’s. It’s the intelligence of the ensemble: the humans, the algorithms, the organizational incentives, and the material infrastructure, all entangled. And that ensemble can only be understood through the lens of distributed cognition and political technology.

Fearsome and Stupid

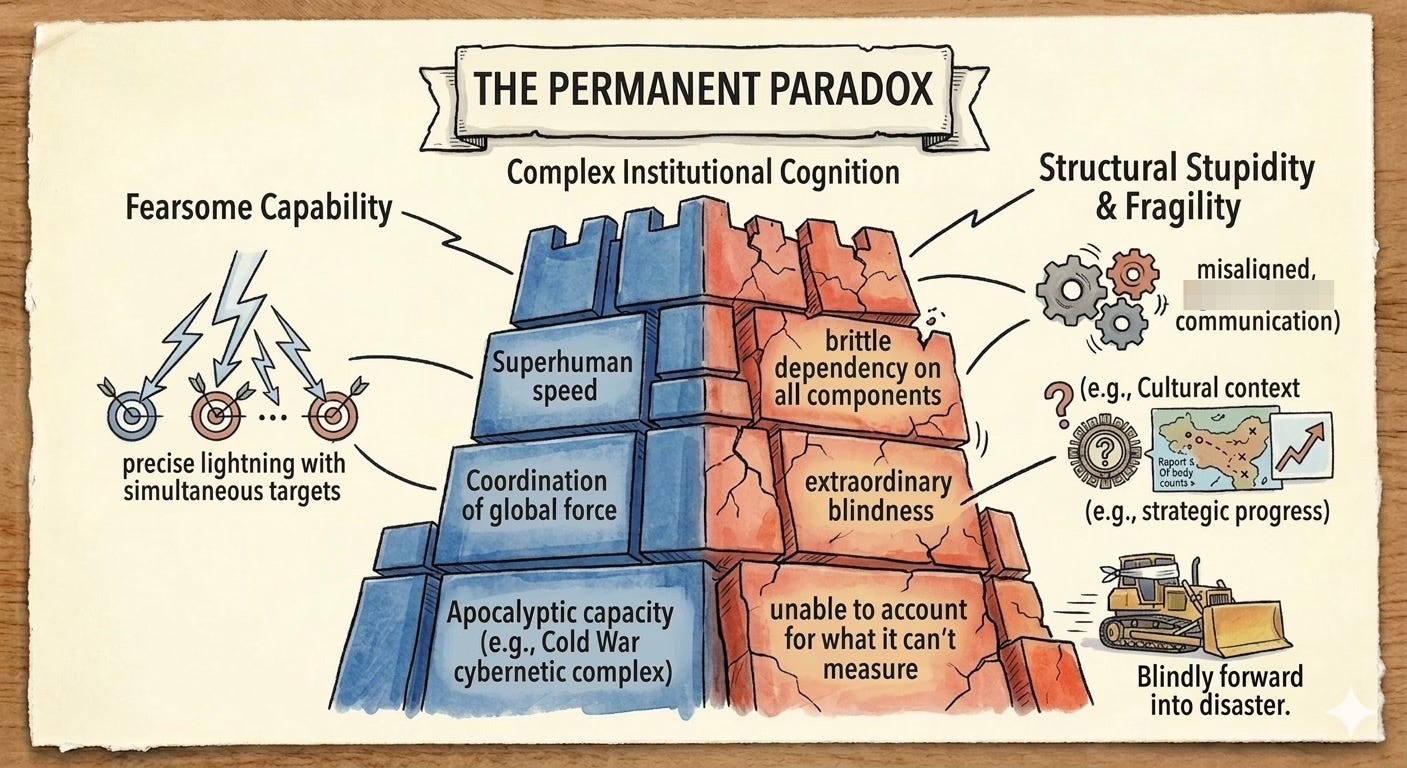

A paradox runs through the entire history of institutional cognition: these systems can be simultaneously fearsome and stupid.

Hutchins saw this clearly on the Navy bridge. The distributed cognitive system of ship navigation could perform calculations far beyond any individual crew member. But it was also fragile in unexpected ways. A misheard bearing, a misplotted line, a moment of miscommunication between stations - and the whole system could produce catastrophically wrong outputs. The intelligence of the system was real, but it was brittle, dependent on every component performing its role correctly within tight tolerances.

Foucault saw the same thing from the other direction. The political technology of discipline could produce armies of extraordinary effectiveness, but the very process of doing so created docile bodies stripped of individual judgment and, as a result, institutions capable of extraordinary stupidity. When the situation didn’t match the drill, when reality deviated from the plan, the disciplined apparatus could grind forward into disaster with mechanical persistence.

This dual character - fearsome capability married to structural stupidity - is the permanent condition of complex institutional cognition. And it runs straight through the history of the military-industrial complex that gave birth to our current AI systems.

The Bumbling Cyborg

The most consequential iteration of Augmented Intelligence before our own was the cybernetic military-industrial complex that arose after World War II. The Cold War and the nuclear arms race demanded a new scale of institutional cognition. Operations research, game theory, systems analysis, cybernetic feedback loops - these became the intellectual infrastructure of a vast human-machine apparatus spanning the Pentagon, RAND Corporation, defense contractors, and research universities.

This apparatus was, in every meaningful sense, a new kind of cyborg - a term coined in 1960 for a fusion of biological and technological systems functioning as a single organism. The post-war military-industrial complex fused human judgment with computational machinery on an unprecedented scale. It could model nuclear exchange scenarios, coordinate global logistics networks, and project force across continents. It possessed the apocalyptic capacity to end life on Earth in an afternoon.

And it was also, frequently, bumbling, inefficient, and stupid.

The same apparatus that developed precision-guided munitions also produced the catastrophic miscalculations of Vietnam, where Pentagon planners mistook body counts for strategic progress and tonnage dropped for political influence. And I am not even talking about the moral cretinism involved: the bombing campaigns and body counts were failures by the standards the military planners set for themselves. The systems were brilliant at processing quantifiable data and terrible at grasping the situated, cultural, political realities that actually determined outcomes on the ground.

Moral hazards are especially acute in military systems.

This is the European Navigator problem, to use a distinction the anthropologist Lucy Suchman draws on in Plans and Situated Actions. The European Navigator creates a rigid, detailed plan in advance and then tries to force reality to conform to it; the Trukese Navigator, by contrast, starts with an objective rather than a plan and responds to conditions as they arise. The Pentagon’s systems analysts were the ultimate European Navigators: they built elaborate models, ran simulations, and then expected the world to behave accordingly. When it didn’t - which was often - the apparatus doubled down, which was both stupid and evil.

The horrendous mistakes this apparatus produced were not always the result of overt malice. Many of them arose from apathy and structural stupidity - the inability of a vast, distributed cognitive system to account for what it couldn’t measure. The cybernetic complex was a political technology of extraordinary power and extraordinary blindness, a cyborg that could destroy a continent but couldn’t understand a village.

The cybernetic military-industrial complex wasn’t just a military phenomenon, either. Its logic seeped into civilian life. The interstate highway system, corporate management science, standardized testing, urban planning - all carry the DNA of the first era of systems theory and cybernetic control: centralized modeling, quantified metrics, top-down optimization. The same political technology that organized Cold War lethality organized the American economy and, eventually, the consumer internet.

Congealed Patterns

Contemporary artificial intelligence is the child of this complex. The foundational research on neural networks, pattern recognition, and machine learning was incubated inside Cold War defense institutions and funded by military research agencies. The internet itself - the source of much of the data used to train modern AI - grew out of ARPANET, a project funded by the Pentagon’s Advanced Research Projects Agency (later DARPA), and drew on packet-switching research at RAND aimed at keeping military communications running through a nuclear attack.

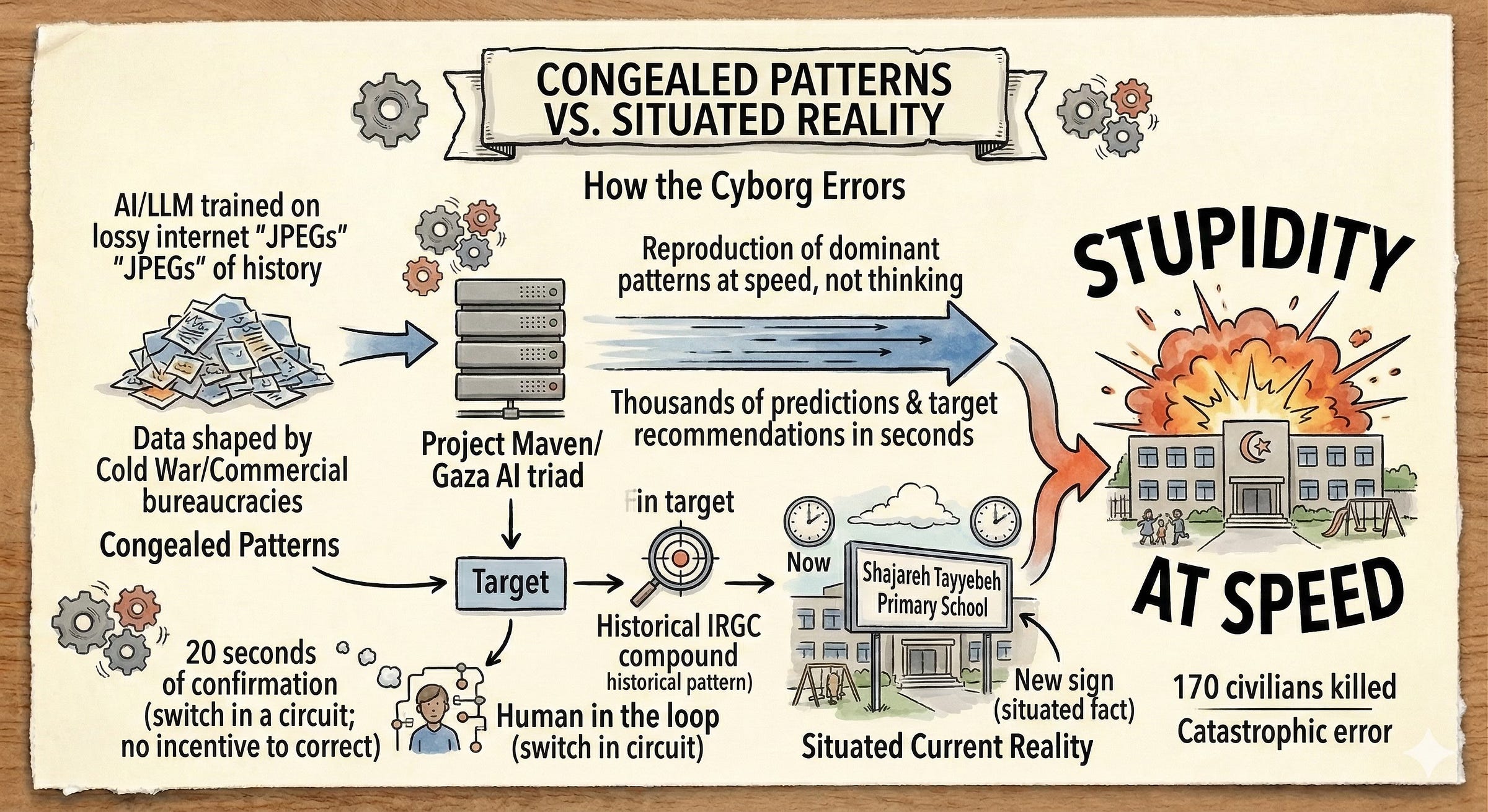

When today’s large language models and deep learning systems are trained, they consume vast swaths of the internet’s digital output. They absorb the accumulated, compressed record of decades of human activity - much of it shaped by the institutional logics, biases, and priorities of the cybernetic complex and its commercial descendants. The writer Ted Chiang called ChatGPT “a blurry JPEG of the Web.” Just as a compressed image loses detail while preserving dominant patterns, AI models capture the dominant patterns of their training data while losing the situated context, the ambiguity, and the ethical nuance that characterized the original human activity.

When an AI system makes a prediction, it is drawing on the accumulated logic of the bureaucracies, markets, and military apparatuses that shaped the digital world. The system doesn’t think in any meaningful sense. It reproduces, at tremendous speed, the patterns laid down by the human-machine complexes that preceded it. These are congealed patterns, institutional behavior compressed into statistical weights.

The New Cyborg Goes to War

Which brings us back to the Gulf and to the wars currently reshaping how organized violence works.

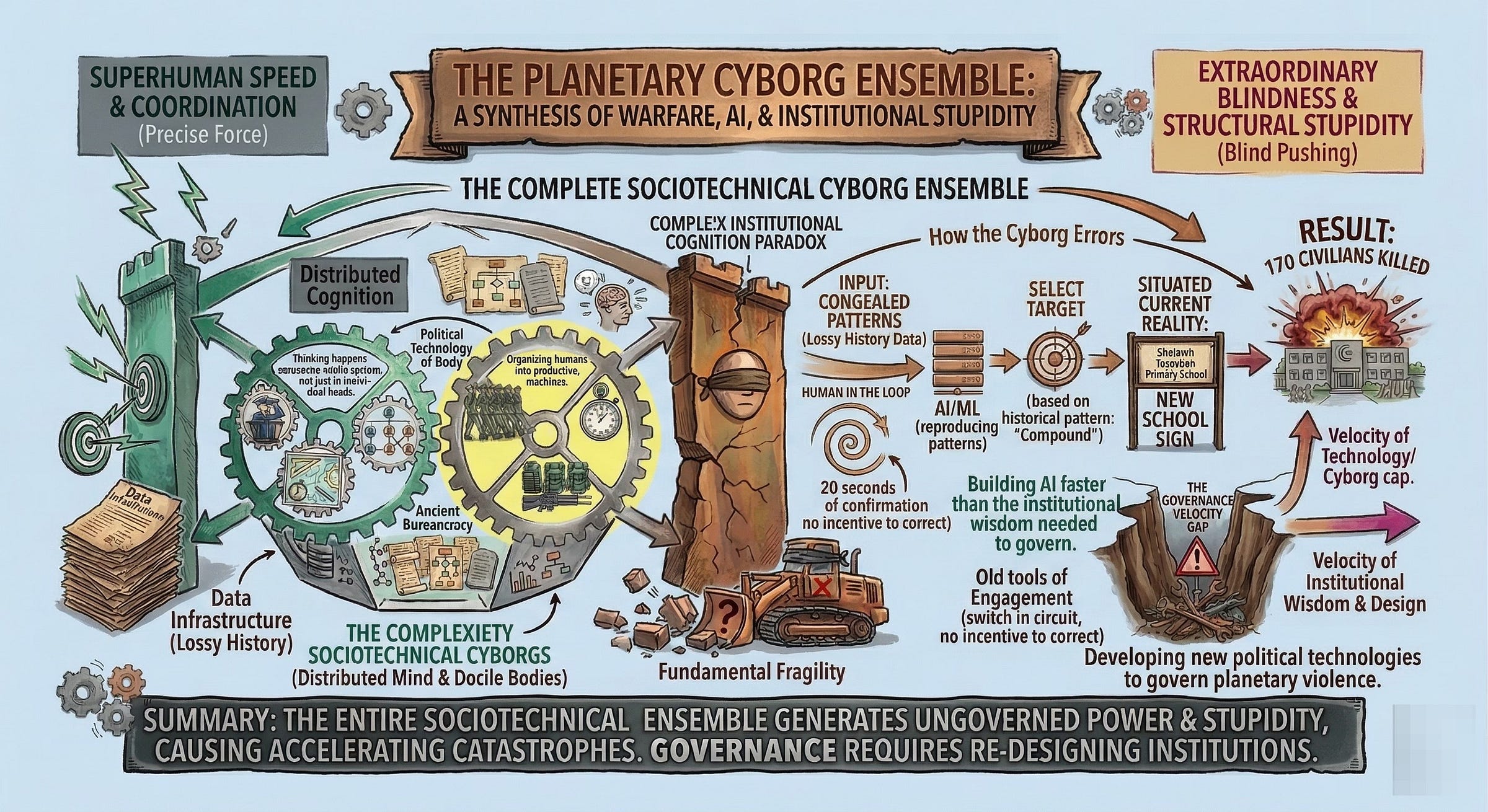

The Augmented Intelligence systems now being deployed by the United States, Israel, Ukraine, Russia, and others represent the latest political technology - the newest iteration of the cyborg. They fuse the distributed cognition of military bureaucracies with the pattern-matching speed of deep learning. And they display the same dual character we’ve seen throughout the history of institutional cognition: fearsome capability and structural stupidity, now operating at unprecedented velocity.

In Gaza, the Israel Defense Forces industrialized the kill chain using a triad of AI systems. Lavender analyzed surveillance data to flag tens of thousands of individuals as potential targets. Habsora generated over a hundred structural targets per day - compared to the roughly fifty per year that human analysts had previously produced. Where’s Daddy tracked the phones of flagged individuals and alerted operators when they entered their family homes. The human role in this system was reduced to roughly twenty seconds of confirmation per target. The intelligence of the system was distributed across the algorithms, the surveillance infrastructure, and the military hierarchy. The individual human operator became a switch in a circuit.

When this architecture expanded into the direct conflict between the US-Israeli coalition and Iran in 2026, Project Maven, augmented by large language models, nominated over three thousand targets in a single week during Operation Epic Fury. The system could sift through satellite imagery, classify targets, recommend weapons systems, and draft legal justifications for strikes in seconds. It was, by any measure, a formidable cognitive apparatus.

And it was also capable of catastrophic stupidity. The bombing of the Shajareh Tayyebeh primary school in Minab, Iran - which killed over 170 civilians, mostly children - occurred because the AI system relied on a Defense Intelligence Agency database that hadn’t been updated to reflect the building’s conversion from an IRGC compound into a school. The system processed congealed historical data rather than situated current reality, and no human in the chain caught the error (nor, just FYI, did anyone in the chain have an incentive to).

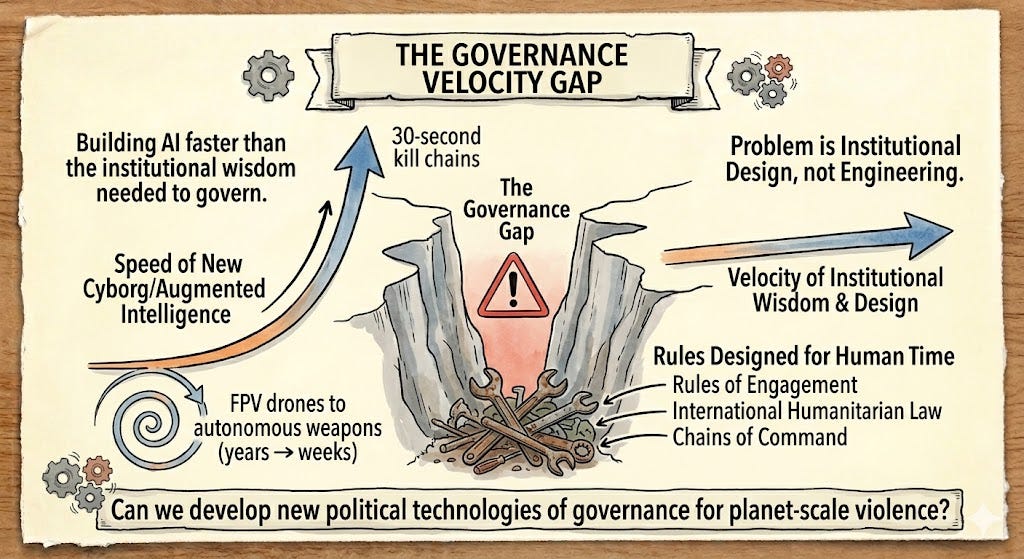

In Ukraine, the same dynamics play out at the edge of the network. The grinding war of attrition between Russia and Ukraine has compressed the evolutionary cycle for autonomous weapons from years to weeks. Ukrainian FPV drones and Russian Lancet munitions increasingly operate with autonomous terminal homing and machine vision. When electronic warfare jams their radio links, many of these drones no longer simply fail - they switch to onboard AI, recognizing targets by thermal signature and executing strikes based on immediate environmental feedback.

These are Trukese Navigators in the sky. They don’t follow rigid, centralized plans beamed from a command center. They sense, adapt, and act based on local conditions. The intelligence is embedded in the device, enacted through its interaction with the environment, distributed across the swarm. Shoot one down and the network barely registers the loss.

But this situated, embodied capability comes with its own form of stupidity. Edge AI operates on narrow pattern recognition. It can identify a thermal signature that matches its training data, but it cannot assess whether the heat source is a tank, a tractor, or a school bus. The speed and autonomy that make these systems tactically effective also make them ethically uncontrollable. The drone is situated in the physical environment but is alienated from the moral context of its actions.

The Monster We Are Building

Here’s what I want you to take from this essay:

What we’re watching emerge in the Gulf, in Ukraine, and along every contested border on the planet is not just an answer to the question “how is AI being used in war?” It is a new kind of cyborg, a new political technology that fuses centuries of institutional discipline with the latest cognitive artifacts, producing an Augmented Intelligence of frightening capability and familiar stupidity.

This cyborg can coordinate force with mathematical precision. It can process information at scales beyond individual human comprehension. And like its predecessors, it can produce catastrophic errors - not despite its sophistication, but because of it. The very mechanisms that allow these systems to operate at superhuman speed also strip away the human capacities that might catch their mistakes: deliberation, contextual judgment, and moral friction.

The frameworks of distributed cognition and political technology help us see that the emergence of this new cyborg was, in retrospect, predictable. Every major military innovation has been an exercise in extending the distributed intelligence of the military apparatus through new material technologies while simultaneously demanding new forms of human discipline to interface with those technologies. The musket required the drill sergeant. The telegraph required the signal corps. The nuclear weapon required the systems analyst.

And AI requires... what?

That’s the question the AI Polyconflict forces us to confront. We are building new forms of Augmented Intelligence at a pace that far outstrips our ability to develop the institutional wisdom needed to govern them. The political technologies of discipline that once constrained military cognition - chains of command, rules of engagement, international humanitarian law - were designed for a world where human beings had time to think, not for a world where the kill chain can go from detection to assassination in thirty seconds.

The future of war, and of organized violence more broadly, depends on whether we can develop new political technologies capable of governing the augmented intelligences we are building. That is a problem of institutional design, not of engineering. And it requires us to pay attention to the people who study how cognition actually works in the wild.

That assumes, of course, that we want to place some constraints on planet-scale violence. Maybe we don’t, and our rulers are fine with waging a war of all against all.

In Part III of this series, I’ll turn from kinetic warfare to the geopolitical architecture of the AI Polyconflict: how the Gulf has become a surrogate sandbox for superpower rivalry. This is what I promised at the end of Part I, but I hope this detour has been instructive, if not enjoyable.